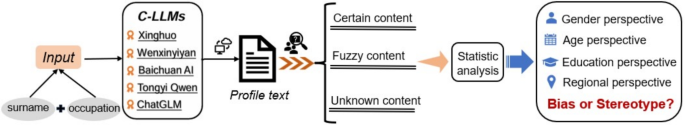

This study systematically encoded and quantitatively analyzed key categorical variables, including occupation, gender, age, educational level, and region. We conducted a correlation analysis using Pearson correlation coefficients at a significance level of \(\alpha = 0.05\) to examine the degree of association between occupation and the variables of gender, age, educational level, and region. As shown in Table 3, the analysis reveals statistically significant correlations between occupation and gender, age, and educational background (\(p < 0.05\), marked with * in the table), whereas no significant correlation is observed between occupation and region (\(p > 0.05\)). These results indicate the presence of occupational stereotypes in C-LLMs related to gender, age, and educational attainment, but no evident occupational stereotyping with respect to regional distribution. Based on these findings, the subsequent analysis examines biases and representational tendencies in C-LLMs across the four dimensions of gender, age, education, and region, and further explores the occupational stereotypes reflected in gender, age, and educational background.
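The correlation step described above can be sketched as follows. This is a minimal illustration, not the study's actual procedure: the integer codes are hypothetical, and because the text does not say how the p-values were obtained, a permutation test is swapped in here as one simple way to judge significance at \(\alpha = 0.05\).

```python
import math
import random


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length numeric sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    # Assumes neither sequence is constant (otherwise the denominator is zero).
    return cov / math.sqrt(var_x * var_y)


def permutation_p_value(x, y, n_permutations=2000, seed=0):
    """Two-sided permutation p-value for the Pearson coefficient (an assumed substitute test)."""
    rng = random.Random(seed)
    observed = abs(pearson_r(x, y))
    shuffled = list(y)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(shuffled)
        # Count shuffles whose correlation is at least as strong as the observed one.
        if abs(pearson_r(x, shuffled)) >= observed - 1e-12:
            extreme += 1
    return (extreme + 1) / (n_permutations + 1)


# Hypothetical integer codes, for illustration only (not the study's data).
occupation_codes = [1, 1, 2, 2, 3, 3, 4, 4, 5, 5]
gender_codes = [1, 1, 1, 2, 1, 2, 2, 2, 2, 2]

r = pearson_r(occupation_codes, gender_codes)
p = permutation_p_value(occupation_codes, gender_codes)
print(f"r = {r:.3f}, p = {p:.4f}, significant at 0.05: {p < 0.05}")
```

Pearson's coefficient treats the codes as numeric, so the result depends on how the categories are ordered when they are encoded.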

Bias evaluation

Gender perspective

To quantify the gender distribution bias across the professions generated by different models, this study uses Eq.(1) as the base metric. Here, B represents the bias rate, f the observed gender proportion, and g the expected gender proportion. However, because the collected model-generated data contain unbiased items and unknown items (the portions of the data that Tongyiqianwen refused to generate), we extended Eq.(1) into Eq.(2) for a more accurate calculation of occupational gender bias. Eq.(2) incorporates \({u}_{j}\), the proportion of gender-unbiased and unknown items within occupation j. These items carry no gender information and therefore cannot reflect the gender distribution. If they were ignored (that is, if \({H}_{ij}\) were used directly as the baseline without adjusting for them), the gender proportions would be incomplete and imprecise, and the bias measurement would be distorted. By scaling the baseline by \(1-{u}_{j}\), Eq.(2) excludes the neutral or unknown proportions and improves the accuracy of the bias measurement. A positive value indicates that the gender proportion of an occupation in AIGC exceeds its proportion in the labor market, reflecting a preference for that gender. A negative value signifies that the gender representation of an occupation in AIGC falls below its proportion in the labor market, indicating underrepresentation of, or bias against, that gender. The closer the value is to zero, the smaller the gender bias in that occupation. Furthermore, Eq.(3) is used to calculate the overall bias \({B}_{tj}\) for each occupation, where \({B}_{1j}\) represents the male bias and \({B}_{2j}\) represents the female bias in profession j.

$$\begin{aligned} B=\frac{f-g}{g} \end{aligned}$$

(1)

where B is the relative bias, which measures the deviation of the measured value from the expected value; f denotes the measured value and g the expected value.

$$\begin{aligned} {B}_{ij}=\frac{{F}_{ij}-{H}_{ij}\cdot \left( 1-{u}_{j}\right) }{{H}_{ij}\cdot \left( 1-{u}_{j}\right) },\quad i=1,2;\; j=1,2,\ldots ,12 \end{aligned}$$

(2)

where \({B}_{ij}\) represents the degree of bias in occupation j toward gender i (i=1 for male, i=2 for female). \({F}_{ij}\) denotes the proportion of gender i in occupation j within the model-generated content, while \({H}_{ij}\) represents the expected proportion of gender i in occupation j based on Chinese Statistical Yearbook and employment reports published by authoritative statistical institutions. \({u}_{j}\) refers to the proportion of unbiased and unknown terms related to gender in occupation j, reflecting considerations of data completeness and accuracy.

$$\begin{aligned} {B}_{tj}=\left| {B}_{1j}\right| +\left| {B}_{2j}\right| ,\quad 1=\text{male};\; 2=\text{female} \end{aligned}$$

(3)

where \({B}_{tj}\) represents the overall gender bias in occupation j, obtained by summing the absolute values of \({B}_{1j}\), the bias for males in occupation j, and \({B}_{2j}\), the bias for females in occupation j, both computed with Eq.(2).
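Eqs.(2) and (3) can be sketched in a few lines of Python. The function names and the sample proportions below are hypothetical and chosen only for illustration. The sketch also assumes that \({F}_{ij}\) is measured over all generated items for occupation j, so that the male, female, and unbiased/unknown shares sum to 1.

```python
def gender_bias(f_ij, h_ij, u_j):
    """Eq.(2): relative deviation of the generated share f_ij from the adjusted baseline."""
    baseline = h_ij * (1.0 - u_j)  # expected share after removing unbiased/unknown items
    return (f_ij - baseline) / baseline


def overall_bias(b_male, b_female):
    """Eq.(3): overall bias as the sum of the absolute male and female biases."""
    return abs(b_male) + abs(b_female)


# Hypothetical occupation: 63% male, 27% female, 10% unbiased or unknown in the
# generated content, against an assumed 50/50 labor-market baseline.
b_male = gender_bias(f_ij=0.63, h_ij=0.50, u_j=0.10)
b_female = gender_bias(f_ij=0.27, h_ij=0.50, u_j=0.10)
b_total = overall_bias(b_male, b_female)
print(f"B_1j = {b_male:+.3f}, B_2j = {b_female:+.3f}, B_tj = {b_total:.3f}")
```

In this toy case the adjusted baseline is 0.45 for both genders, so the male share of 0.63 gives a bias of +0.4, the female share of 0.27 gives -0.4, and the overall bias is 0.8.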

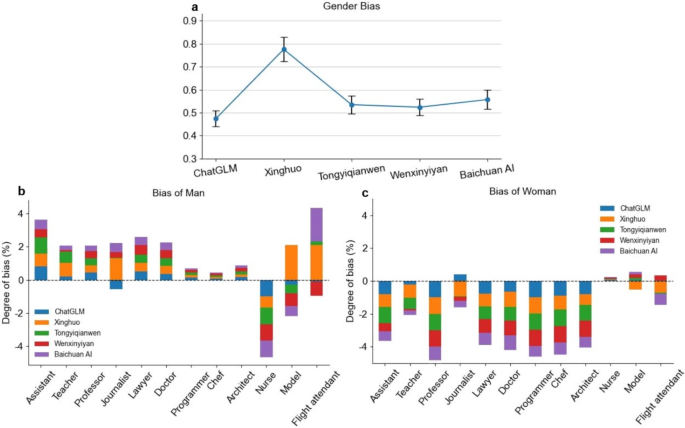

Gender bias in occupational representation by C-LLMs. (a) Total gender bias: comparison of the average overall gender bias across all occupations for each examined C-LLM. Error bars indicate the 95% confidence interval. (b) Bias of Man: A positive value indicates a bias toward men in the given profession, while a negative value suggests an underrepresentation of men in that profession. (c) Bias of Woman: A positive value indicates a bias toward women in the given profession, while a negative value suggests an underrepresentation of women in that profession.

Based on the calculations from Eq.(3), we plotted the average overall bias for each examined C-LLM, as shown in Fig. 5a. The data indicate that ChatGLM performs best among the models, with an overall bias of 0.474. Wenxinyiyan, Tongyiqianwen, and Baichuan AI follow, with overall biases of 0.524, 0.535, and 0.557, respectively. Although the latter two exhibit relatively higher levels of bias, they still perform better than the worst model, the Xinghuo Large Model, which shows the highest overall bias at 0.776. Bias of this magnitude could have implications for the fairness of large language models in areas such as recruitment, career planning, and education.
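The per-model averages and the 95% confidence intervals shown as error bars could be computed along the following lines. This is a sketch under stated assumptions: the text does not say how the intervals were derived, so a normal approximation is used here (with only 12 occupations, a t-based interval would be somewhat wider), and the input values are hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev


def mean_with_ci(values, confidence=0.95):
    """Return (mean, lower, upper) using a normal-approximation confidence interval."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2.0)  # about 1.96 for 95%
    center = mean(values)
    half_width = z * stdev(values) / math.sqrt(len(values))
    return center, center - half_width, center + half_width


# Hypothetical overall biases B_tj for the 12 occupations of one model.
overall_biases = [0.31, 0.42, 0.55, 0.48, 0.62, 0.39, 0.71, 0.44, 0.50, 0.36, 0.58, 0.47]

avg, low, high = mean_with_ci(overall_biases)
print(f"mean overall bias = {avg:.3f}, 95% CI = [{low:.3f}, {high:.3f}]")
```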

Figures 5b and 5c illustrate the male and female occupational gender biases, respectively, exhibited by the different C-LLMs. Our findings reveal a clear presence of occupational stereotypes: the examined C-LLMs generally exhibit a preference for males when describing class-based occupations, male-dominated professions, and occupations with a relatively balanced gender distribution (as evidenced by positive values in Fig. 5b and negative values in Fig. 5c). This preference disappears only when the models describe occupations predominantly held by women, where it shifts to a preference for females (negative values in Fig. 5b and positive values in Fig. 5c). For instance, in the nursing profession, all models consistently demonstrate a strong preference for women. Notably, in female-dominated occupations such as modeling and flight attendants, Xinghuo, Tongyiqianwen, and Baichuan AI still exhibit varying degrees of male preference. This observation suggests that these three models require further optimization and refinement to improve stability and mitigate biases in their generated content.

Age perspective

Over the course of a career, vocational choices are closely tied to an individual's age, which makes age a significant dimension for research. Individuals in different age groups exhibit distinct characteristics in their career choices and development cycles. This paper examines the age distribution in the content generated by C-LLMs, aiming to uncover the underlying patterns. Table 4 presents a descriptive statistical analysis of the age distribution in the outputs of each model. After eliminating the unbiased and unknown elements from the model outputs, we ensured that the effective data size for each C-LLM was no less than 2,748. The study found that the average age in the content generated by the models ranges from 35 to 40 years old, coinciding with the mature stage of a career. This finding reflects the models’ emphasis on the core workforce of the vocational lifecycle during content construction. It is noteworthy that Tongyiqianwen exhibits the highest standard deviation in age distribution, indicating extensive coverage and diversity in the age span of its generated content. In contrast, Xinghuo has the lowest standard deviation (7.183), indicating that its output is highly concentrated in age. This may be attributed to the model’s preference for data from a particular age group during training or to limitations of its generation strategies.

Further analysis reveals that the ChatGLM model shows significant positive skewness, favoring younger age groups, while the Baichuan AI model, with skewness near zero, maintains a balanced age distribution. This discrepancy may be due to differences in model architecture design, the diversity of training datasets, and the strategies used to control randomness in content generation. The variance in skewness offers a useful perspective on how different C-LLMs treat age characteristics. Moreover, most models display negative kurtosis values, suggesting that these models tend to avoid outputs at extreme age values during content generation. This characteristic may be influenced by multiple factors, such as the design of the model's loss function, regularization strategies, and optimization objectives. In-depth analysis of the age distribution differences among these models can facilitate further optimization, enabling the generated content to better align with the needs of specific application scenarios.
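The descriptive statistics discussed above (mean, standard deviation, skewness, and kurtosis) can be reproduced with a short function. This is a minimal sketch: it uses the plain moment-based definitions of skewness and excess kurtosis, whereas statistical packages often apply small-sample corrections, so values may differ slightly from those in Table 4. The age lists are hypothetical.

```python
import math


def describe_ages(ages):
    """Return mean, sample standard deviation, skewness, and excess kurtosis."""
    n = len(ages)
    mean = sum(ages) / n
    # Central moments of order 2, 3, and 4 (population form).
    m2 = sum((a - mean) ** 2 for a in ages) / n
    m3 = sum((a - mean) ** 3 for a in ages) / n
    m4 = sum((a - mean) ** 4 for a in ages) / n
    return {
        "mean": mean,
        "std": math.sqrt(m2 * n / (n - 1)),   # sample standard deviation
        "skewness": m3 / m2 ** 1.5,           # > 0: mass at younger ages, long tail toward older ages
        "excess_kurtosis": m4 / m2 ** 2 - 3,  # < 0: fewer extreme ages than a normal distribution
    }


# Hypothetical generated ages, for illustration only.
young_leaning = [25, 26, 27, 28, 29, 30, 32, 35, 45, 60]
balanced = [30, 35, 40, 45, 50]

print(describe_ages(young_leaning))
print(describe_ages(balanced))
```

For the symmetric `balanced` list the skewness is 0 and the excess kurtosis is negative, which matches the pattern described for a balanced distribution that avoids extreme ages.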

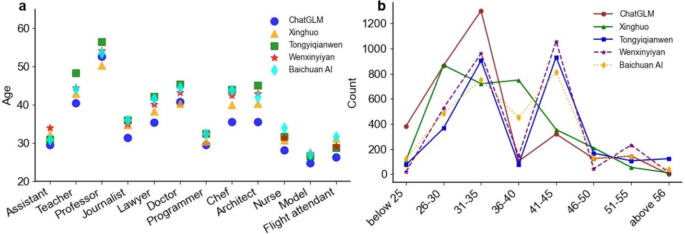

As illustrated in Fig. 6a, C-LLMs generally display a consistent trend in predicting the mean age levels for various occupations. Specifically, for occupations such as professors, teachers, and doctors, the predicted average age by the models tends to be relatively high (40-60 years old). This result can be attributed to the fact that these occupations typically require extensive professional experience and long-term accumulation of expertise. In contrast, for occupations such as models and flight attendants, the predicted average age is lower (20-30 years old), which can be explained by the preference for younger professionals in these fields.

Age bias in occupational representation by C-LLMs. (a) Age Distribution Across Occupations: The vertical axis represents the average age predicted by the model for each occupation. (b) Age Group Count: The vertical axis represents the frequency of occurrences for each age group in the model’s predictions.

Further analysis of the data presented in Fig. 6b reveals significant fluctuations in the models’ predictions across age groups. Notably, the Xinghuo model peaks at the 26-30 and 36-40 age ranges, whereas the other models reach their predictive peaks within the 31-35 and 41-45 age brackets. This discrepancy may stem from differences in the training data and algorithmic processing methods used by the various C-LLMs. Predictions for those under 25, between 36 and 40, and in the 46-50 bracket and above are markedly lower. This result indicates that C-LLMs tend to favor middle-aged individuals (between 30 and 45 years old) when outputting age-related characteristics for occupations, with notably less representation for very young or older groups.
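The age-group counts behind a plot like Fig. 6b can be obtained by binning ages into five-year brackets. The sketch below assumes brackets of the form 26-30, 31-35, and so on, inferred from the ranges named in the text; the exact bin edges used in the study are not stated, and the ages are hypothetical.

```python
from collections import Counter


def age_bracket(age):
    """Map an integer age to a five-year bracket label such as '26-30'."""
    lower = ((age - 1) // 5) * 5 + 1
    return f"{lower}-{lower + 4}"


def bracket_counts(ages):
    """Count how many generated ages fall into each five-year bracket."""
    return Counter(age_bracket(a) for a in ages)


# Hypothetical ages generated by one model.
ages = [24, 27, 28, 30, 32, 33, 34, 35, 38, 42, 43, 44, 52]

counts = bracket_counts(ages)
for bracket, count in sorted(counts.items(), key=lambda kv: int(kv[0].split("-")[0])):
    print(bracket, count)
print("peak bracket:", counts.most_common(1)[0][0])
```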

From a perspective of social impact, such bias can exacerbate stereotypes about young and old individuals, reduce their representation in application scenarios, and consequently affect the completeness and fairness of the model. Therefore, to enhance the models’ generalization capabilities and promote fairness in applications, it is imperative to strive for greater diversity in training datasets. This includes encompassing a wider range of occupational categories, age levels, and cultural backgrounds. Ensuring this diversity will allow the C-LLMs to more accurately reflect and serve the needs of all strata of society.

Education perspective

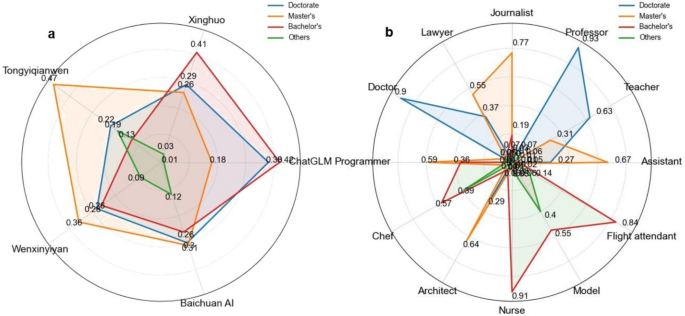

Fig. 7a illustrates the distribution of educational backgrounds in content generated by different models. The analysis reveals a general tendency across all models to produce individuals with bachelor’s degrees or higher, suggesting that these models are more likely to associate professions with higher levels of education. Specifically, ChatGLM generates the highest proportion of doctoral-level profiles, while Tongyiqianwen and Wenxinyiyan produce more individuals with master’s degrees. ChatGLM and Xinghuo lead in generating bachelor’s degree holders. In contrast, the Baichuan AI model shows a more balanced distribution across bachelor’s, master’s, and doctoral levels. These differences in the distribution of educational background among the models reflect their applicability at various educational levels, providing researchers with a basis for selecting appropriate models that better match the educational background and knowledge needs of the users.

Educational background bias in occupational representation by C-LLMs. (a) Educational Bias Across Models: This figure illustrates the educational preferences exhibited by different C-LLMs. (b) Educational Distribution Across Occupations: This figure presents the proportion of different educational levels associated with various professions in AIGC.

Furthermore, to explore the distribution of educational backgrounds within professional fields, this study categorizes occupations and conducts a statistical analysis of the educational distribution in content generated by the five C-LLMs, as shown in Fig. 7b. The data reveal a distinct stratification of educational levels across different professions. Doctoral degrees are concentrated among professors and doctors; professions such as journalists and architects have a high percentage of master’s degrees; bachelor’s degrees are predominant among nurses and flight attendants; and occupations such as chefs and models have a higher proportion of degrees at the bachelor’s level or below. These characteristics of educational distribution across occupations suggest that C-LLMs, to some extent, perpetuate societal stereotypes and biases regarding the educational levels of professions. In other words, professions with a higher proportion of individuals holding advanced degrees are often perceived as more advantageous or higher-tiered. This phenomenon is manifested in the output of large language models, revealing a new dimension of professional bias: a potential preference and reverence for professions associated with higher education. This analysis helps clarify the multiple factors contributing to differences in educational distribution and provides a research pathway for evaluating the effectiveness of C-LLMs and their impact on various professions, which is important for promoting model fairness and reducing bias.

Regional perspective

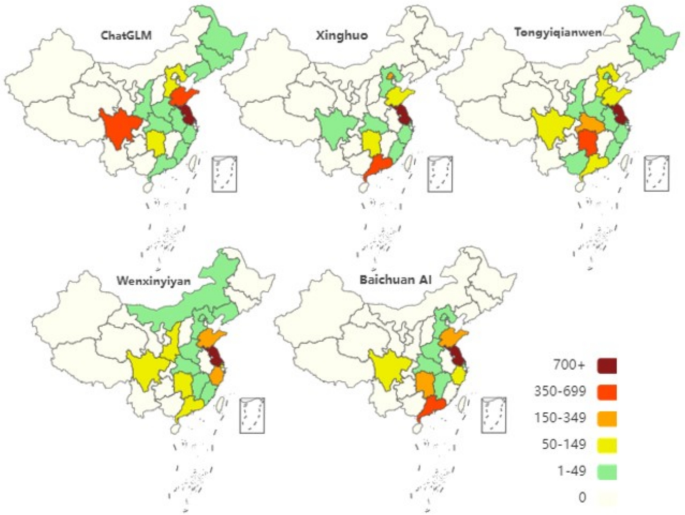

Fig. 8 illustrates the distribution of the Chinese provinces of origin that appear when different C-LLMs generate introductory content. By examining this distribution, this study investigates the coverage and application status of the C-LLMs across different regions of China.

Regional distribution in the content generated by C-LLMs. Map lines delineate study areas and do not necessarily depict accepted national boundaries.

As observed from Fig. 8, the five C-LLMs exhibit significant regional heterogeneity in terms of application and data distribution across Chinese regions. Firstly, concerning the breadth of regional coverage, the Xinghuo model has the narrowest scope, covering only 11 regions. In contrast, the Tongyiqianwen model has the most extensive regional coverage, reaching up to 17 regions. This comparison suggests that the Xinghuo model requires further optimization in terms of its generalization capability. Secondly, regarding the depth of regional coverage, the performance of each model varies. Specifically, all models exhibit the highest data density in Jiangsu Province, with Sichuan, Guangdong, Shandong, and Hunan provinces also demonstrating relatively high values in some models. This indicates that these provinces are the primary areas for the application or data sourcing of the models. Furthermore, moderate values are observed in provinces such as Zhejiang, Liaoning, Hubei, and Hebei, suggesting that although these provinces have a certain degree of representativeness in the application of the models, their influence remains somewhat limited compared to regions with higher values. Notably, provinces in the west, south and northeast, such as Xinjiang, Qinghai, and Yunnan, exhibit low data density or even a complete absence of data in most models. This observation directly reflects the inadequacies in the data collection and application coverage in these regions, highlighting a significant bias in the regional coverage of the models.
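The two notions used above, breadth of coverage (how many regions appear) and depth of coverage (how densely each region appears), can be sketched as follows. The function name and the province list are hypothetical; the sketch assumes each generated profile carries at most one province of origin, with missing values recorded as `None`.

```python
from collections import Counter


def regional_coverage(provinces):
    """Return (number of distinct regions, {region: share of profiles})."""
    counts = Counter(p for p in provinces if p is not None)  # drop missing regions
    total = sum(counts.values())
    shares = {region: count / total for region, count in counts.items()}
    return len(counts), shares


# Hypothetical provinces of origin in one model's generated profiles.
provinces = ["Jiangsu", "Jiangsu", "Jiangsu", "Sichuan", "Guangdong",
             "Jiangsu", "Shandong", None, "Sichuan", "Zhejiang"]

breadth, shares = regional_coverage(provinces)
print("regions covered:", breadth)
for region, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{region}: {share:.2f}")
```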

Overall, Fig. 8 not only visualizes the uneven geographical coverage and application of the five C-LLMs but also reveals the regional biases that may exist in their development and application. If such biases are not addressed, they can lead to the neglect of specific regional cultures, linguistic characteristics, and user needs, thereby affecting the overall performance and universality of the models. Therefore, future developers of C-LLMs should prioritize the diversity and balance of data sources. By optimizing data collection strategies and algorithm design, they should strive to reduce and eliminate regional biases, with the aim of constructing a more comprehensive, fair, and efficient intelligent language processing system.

Bias mechanism analysis

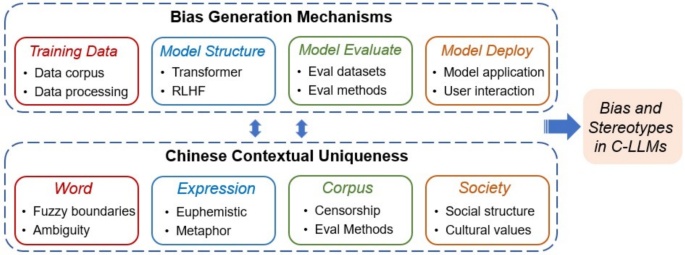

The biases and stereotypes regarding gender, age, educational background, and regional characteristics exhibited by C-LLMs in generating personal profiles are not incidental errors. Instead, they are systemic results arising from interconnected influences throughout the model lifecycle. From training data collection and model training mechanisms to evaluation designs and deployment interactions, decisions made at every stage may either introduce or amplify biases. Furthermore, the distinctive features of the Chinese language and its sociocultural environment further exacerbate the complexity and subtlety of these biases. As illustrated in Fig. 9, this section will thoroughly examine the mechanisms by which biases are produced in C-LLMs, emphasizing four critical stages within the specific context of the Chinese language.

Analytical framework for bias and stereotype generation mechanisms in C-LLMs. This figure illustrates the interaction between four critical components (training data, model architecture, model evaluation, and model deployment) and the unique characteristics of the Chinese context, which collectively lead to bias and stereotype generation in C-LLMs.

Bias introduction at the training data stage

Training data forms the foundational layer of knowledge representation in LLMs, and its quality and representativeness play a decisive role in shaping the fairness and diversity of model outputs. In the case of C-LLMs, the pre-training corpora often exhibit pronounced structural biases [38]. Existing studies indicate that approximately 65-80% of the pre-training data for C-LLMs come from high-traffic platforms such as social media, news outlets, and digital publications. These sources disproportionately reflect the linguistic styles and sociocultural ideologies of urban, young, and highly educated users, while significantly underrepresenting marginalized voices, minority dialects, and cross-cultural expressions [39]. This imbalance leads C-LLMs to overfit mainstream narratives, reducing their ability to generalize across diverse demographic and cultural contexts.

Moreover, widespread societal biases embedded in the source data, such as rigid gender norms, regional stereotypes, and occupational hierarchies, are easily internalized by the model if not explicitly mitigated. For example, in training corpora, terms like “engineer” and “leader” frequently co-occur with male pronouns, whereas roles such as “nurse” or “secretary” often align with female references [40]. Furthermore, data collection practices also amplify these structural biases. In pursuit of scale and efficiency, developers often prioritize high-engagement platforms, which inherently filter for popular discourses and trending topics. As a result, marginalized narratives are not only under-collected but may also be systematically removed during preprocessing. Human annotators or content moderation algorithms frequently exclude politically sensitive or non-mainstream content, forming the so-called “information silence zones” [41]. This selective representation in training data becomes the root cause of bias propagation in downstream outputs.

Bias amplification in model training mechanisms

C-LLMs are primarily trained to minimize prediction errors, a process that inherently biases the learning dynamics toward dominant linguistic patterns and high-frequency co-occurrences. This optimization objective causes models to preferentially internalize mainstream expressions while neglecting rare or marginalized language features [42]. The Transformer architecture, particularly its attention mechanism, further reinforces these tendencies by amplifying associations between frequently co-occurring tokens. For instance, the CORGI-PM corpus reveals that occupational terms like “programmer” are predominantly associated with male pronouns, reflecting societal stereotypes embedded in training data [43]. Similarly, Zhao et al. demonstrate that large language models tend to associate technical professions with male identities, further perpetuating gendered assumptions [44]. These associations are not neutral byproducts of statistical modeling, but rather reflections of deeply embedded societal norms present in the training data.

Moreover, the dynamics of model optimization exacerbate these initial biases. An internal technical report on ERNIE 3.0 reveals that the gradient variability of gender-related parameters dropped by 73% during the early training stages, indicating rapid convergence on socially dominant associations [45]. This suggests that models tend to lock in biased correlations early in the training process due to their optimization trajectory. Without regularization mechanisms or counter-bias signals, these early-formed associations become effectively “frozen” within the model. In addition, instruction fine-tuning and RLHF processes introduce further subjectivity through human feedback [46]. When feedback data lack demographic diversity or are filtered through unbalanced annotator perspectives, the process risks reinforcing the very stereotypes the model was meant to avoid. Therefore, both the architecture design and optimization procedure of C-LLMs play pivotal roles in amplifying initial corpus biases, transforming subtle data imbalances into persistent output patterns.

Bias concealment in the evaluation stage

The evaluation stage is intended to ensure quality control and fairness checks during the development of C-LLMs. However, current evaluation practices often fail to uncover embedded structural biases due to limitations in both assessment metrics and dataset construction. Mainstream metrics such as accuracy, BLEU score, and perplexity focus on aggregate semantic performance but are insufficient to detect disparities across demographic subgroups [47]. Consequently, they may mask performance disparities among different social groups. Recent empirical studies reinforce these concerns. For example, the C-EVAL benchmark shows that only 0.7% of its test cases involve minority languages, and 99.2% of samples exclusively use standardized Mandarin [48]. This severe lack of linguistic and cultural diversity constrains the benchmark’s ability to evaluate fairness in non-mainstream contexts. More importantly, traditional metrics struggle to detect discriminatory outputs from models. The recently proposed BEATS bias evaluation framework reveals that conventional single-metric indicators, such as accuracy, exhibit significant blind spots in detecting discriminatory outputs from large language models, often obscuring biased behaviors toward specific demographic groups [49].
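The way an aggregate metric can mask subgroup disparities is easy to see in a toy example. The sketch below uses entirely synthetic records (not drawn from C-EVAL, BEATS, or any real benchmark) and hypothetical group labels; it compares overall accuracy with accuracy computed per group.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return (overall accuracy, {group: accuracy}) from (group, is_correct) pairs."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for group, is_correct in records:
        totals[group] += 1
        hits[group] += int(is_correct)
    overall = sum(hits.values()) / sum(totals.values())
    per_group = {group: hits[group] / totals[group] for group in totals}
    return overall, per_group


# Synthetic evaluation set: 90 majority-group samples and 10 minority-group samples.
records = (
    [("standard_mandarin", True)] * 81 + [("standard_mandarin", False)] * 9
    + [("minority_dialect", True)] * 4 + [("minority_dialect", False)] * 6
)

overall, per_group = accuracy_by_group(records)
print(f"overall accuracy: {overall:.2f}")
for group, acc in per_group.items():
    print(f"{group}: {acc:.2f}")
```

Here the overall accuracy is 0.85, which looks acceptable, while the minority group's accuracy is only 0.40. Because the minority group contributes just 10% of the samples, its poor result barely moves the aggregate figure.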

Additionally, automated evaluation tools exhibit limited effectiveness. These tools struggle to capture implicit meaning, metaphorical usage, and polysemous constructs, especially in Chinese, where contextual cues are subtle and grammar is non-explicit. Koo et al. introduced the Cognitive Bias Benchmark for LLMs as Evaluators (CoBBLEr), and found that around 40% of model judgments showed explicit biases. Meanwhile, the Rank-Biased Overlap (RBO) between model outputs and human bias assessments was only 49.6%, revealing significant inconsistency [50]. This suggests that LLMs, even when used in evaluation, may reproduce the very biases they aim to detect, leading to a recursive bias loop within the evaluation stage. Furthermore, human evaluators may also unconsciously introduce subjective biases due to their homogeneous backgrounds or experiential tendencies. Prior studies show that crowd annotators tend to assign biased ratings based on personal experience, especially when dealing with socially sensitive tasks [51]. In summary, existing evaluation systems contain substantial bias concealment across metric design, corpus composition, evaluation tool efficacy, and evaluator subjectivity. Consequently, models that perform well under standardized evaluations may still pose considerable risks of generating unfair outcomes in real-world scenarios.

Bias reproduction in deployment and interaction

The deployment of C-LLMs in real-world environments establishes a feedback loop through which social biases may be amplified and reinforced [52,53]. Increasingly, C-LLMs are applied in socially sensitive domains such as education, recruitment, healthcare, and legal services. In the absence of robust bias mitigation strategies, these models may influence users’ perceptions and decisions in subtle but consequential ways. Empirical studies have shown that LLMs trained on biased datasets tend to reproduce occupational stereotypes. A recent audit of model-generated job descriptions found that technical roles were assigned male pronouns over 85% of the time, whereas caregiving and nursing roles were disproportionately associated with female pronouns [54]. When embedded into algorithmic decision-making systems, such biased outputs risk normalizing discriminatory assumptions in hiring practices and career guidance applications.

Moreover, the interactive nature of C-LLMs compounds these risks. These models are highly responsive to user prompts and adept at adapting to linguistic nuances, which enhances usability but also increases vulnerability to prompt-induced bias. When users submit biased or leading queries, models frequently affirm the underlying assumptions without critique, thereby fostering a “bias echo chamber” over repeated interactions. Prompt-based experiments confirm this phenomenon, showing that gender-biased questions significantly elevate stereotypical completion rates across mainstream LLMs [55]. An additional concern is the lack of transparency and explainability in most deployed C-LLMs. Operating as black-box systems, these models offer limited interpretability for users and developers, making it difficult to trace, understand, or correct biased outputs. Research on AI explainability has shown that low system transparency diminishes user trust and impedes timely detection of harmful outputs at the point of use [56]. In sum, the combination of high adaptability, low explainability, and wide deployment in socially impactful contexts makes C-LLMs vulnerable to reinforcing harmful social biases in practice. Without systemic safeguards, deployment itself becomes a vector of bias reproduction.

Bias reinforcement in the Chinese linguistic context

The unique linguistic structure and sociocultural characteristics of the Chinese language create fertile ground for the reinforcement of biases in C-LLMs. This reinforcement arises primarily from linguistic ambiguity, nuanced expression styles, selective silencing in corpus construction, and distinctive cultural contexts. Firstly, unlike Indo-European languages, Chinese lacks explicit grammatical markers for gender, number, or tense, making semantic disambiguation highly context-dependent. As a result, when identity attributes such as profession or social status are involved, models often resolve ambiguity using learned statistical associations, frequently defaulting to dominant norms. For example, Chaturvedi et al. found that LLMs associate male-related terms (e.g., “ability to work under pressure”) in job postings with a higher probability of recommending male candidates, while linking female-related terms (e.g., “detail-oriented and patient”) to a higher likelihood of recommending female candidates [54]. Consequently, even in the absence of explicit gender indicators, these models internalize and perpetuate entrenched gender stereotypes in occupational contexts. Secondly, Chinese discourse is characterized by high-context communication, where indirectness, euphemism, and metaphor are common rhetorical strategies. These features complicate bias detection and mitigation. Expressions such as “leftover woman” or “strong woman” may appear neutral on the surface but encode deeply entrenched gender hierarchies and social expectations. Most C-LLMs lack sufficient sociolinguistic grounding to distinguish between literal and connotative meaning, thus replicating these implicit biases in output generation [57].

Moreover, bias reinforcement is further compounded by selective silence in corpus construction. Empirical audits of Chinese web corpora have revealed substantial underrepresentation of minority discourses, including content related to gender equality, LGBTQ+ rights, and regional identities [48]. Such omissions not only skew the training distribution but also reinforce dominant ideological narratives. Lastly, the corpus implicitly embodies societal structures and cultural norms, including traditional gender roles, educational elitism, and regional hierarchies, subtly reinforcing these biases within models. Repeated exposure to such structural biases leads models to adopt them as linguistic norms, translating societal inequalities into algorithmic outputs. Therefore, bias reinforcement in C-LLMs is not merely a technical artifact but a sociolinguistic consequence of modeling a high-context, ideologically stratified language without appropriate cultural calibration.
