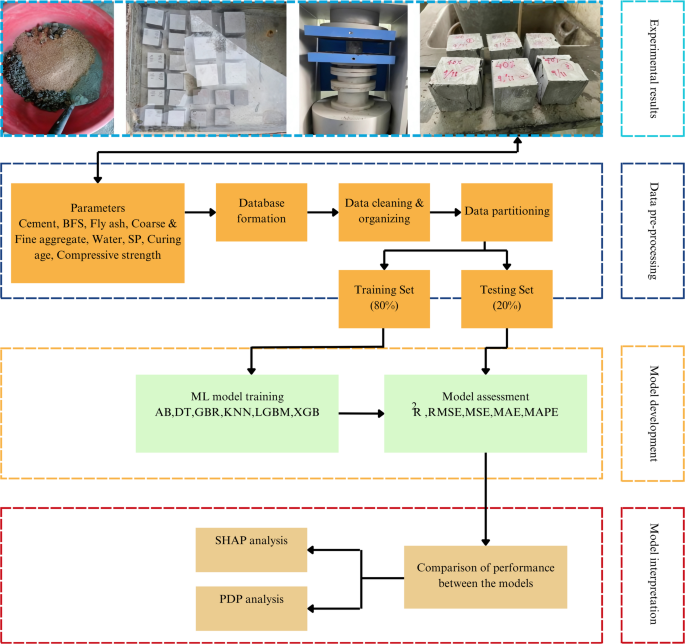

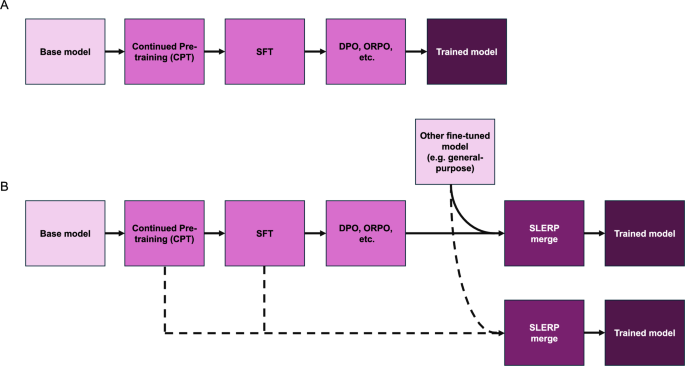

We follow the process depicted in Fig. 2 to develop and assess models. Figure 2A shows a conventional linear training pipeline in which a base model undergoes Continued Pre-Training (CPT), followed by Supervised Fine-Tuning (SFT), and is then optimized using methods such as Direct Preference Optimization (DPO) or Odds Ratio Preference Optimization (ORPO) to produce a trained model. Figure 2B shows an alternative pipeline in which, after CPT, SFT, and optimization (e.g., DPO, ORPO), the model is further enhanced by merging it with another fine-tuned model (e.g., a general-purpose model). Model merging can be performed with models extracted from various training stages, such as after CPT, after SFT, or at the final stage. The implementation is structured sequentially, with each step building on the previous one to enhance the model’s capabilities in stages. As summarized in Table 1, CPT exposes the model to domain-relevant data, while SFT provides task-specific instruction using labeled datasets in formats such as question-answer or instruction-response pairs. Preference optimization methods (e.g., DPO or ORPO) then align the model with human or baseline preferences established in prior steps. Finally, model merging integrates the strengths of different training paths, leading to improved generalization and, in many cases, the emergence of new capabilities absent from the individual models. This structured progression balances task-specific accuracy and user-preference alignment by addressing knowledge acquisition and preference tuning at distinct stages.
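The stage combinations described above, and the strategy labels used in the figures that follow (e.g., Instruct-CPT-SFT-DPO-SLERP), can be sketched as ordered lists of stages. The snippet below is an illustrative enumeration under simplifying assumptions of our own (CPT always first, SFT optional, at most one preference method, an optional final SLERP merge); the helper names `variant_name` and `all_variants` are hypothetical, and not every generated combination was necessarily trained in this work.

```python
from itertools import product

# Illustrative (hypothetical) enumeration of pipeline variants:
# start model -> CPT -> optional SFT -> optional DPO/ORPO -> optional SLERP merge.
STARTS = ("Base", "Instruct")
SFT_OPTIONS = (False, True)
PREF_OPTIONS = (None, "DPO", "ORPO")
MERGE_OPTIONS = (False, True)


def variant_name(start, sft, pref, merge):
    """Build a strategy label such as 'Instruct-CPT-SFT-DPO-SLERP'."""
    stages = [start, "CPT"]  # CPT is always the first training stage here
    if sft:
        stages.append("SFT")
    if pref is not None:
        stages.append(pref)  # at most one preference-optimization method
    if merge:
        stages.append("SLERP")  # merge with a general-purpose (Instruct) model
    return "-".join(stages)


def all_variants():
    """Return every label produced by the stage options above."""
    return [
        variant_name(start, sft, pref, merge)
        for start, sft, pref, merge in product(STARTS, SFT_OPTIONS, PREF_OPTIONS, MERGE_OPTIONS)
    ]


if __name__ == "__main__":
    names = all_variants()
    print(len(names), "variants, e.g.", names[:3])
```

Encoding each strategy as a stage list makes it explicit that a merge can be attached after any stage (e.g., Instruct-CPT-SLERP or Instruct-CPT-ORPO-SLERP).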

A A conventional training pipeline where a base model undergoes Continued Pre-Training (CPT), followed by Supervised Fine-Tuning (SFT), and then optimized using methods like Direct Preference Optimization (DPO) or Odds Ratio Preference Optimization (ORPO) to produce a trained model. Assessment of the model can be performed at each of the stages, such as using the SFT results for benchmarking. B An alternative pipeline where, after CPT, SFT, and optimization (e.g., DPO, ORPO), the model is further enhanced by merging it with another fine-tuned model (e.g., a general-purpose model). Merging can be done with models extracted from various training stages, such as after CPT, SFT or at the final stage.

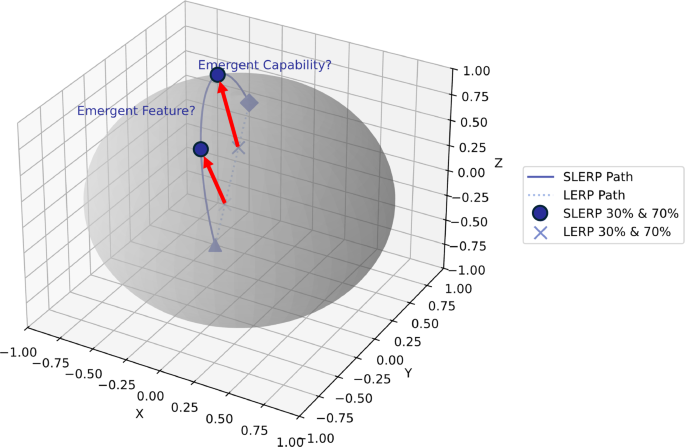

We now examine model merging strategies in more detail. In this work, we focus on Spherical Linear Interpolation (SLERP; for details, see the “Materials and methods” section), as we found it to be the most effective method. SLERP is a mathematical technique originally introduced in computer graphics for smoothly interpolating between rotations represented by quaternions30. It has since found widespread application in domains that require smooth transitions between orientations or states, including robotics, physics simulations, and real-time graphics. In robotics, for instance, SLERP is used for the practical parameterization of rotations, enabling seamless motion planning and control31. In physics simulations and computer graphics, SLERP is crucial for visualizing and animating rotations in a way that preserves the continuity and smoothness of motion32,33. By maintaining the geometric relationships between interpolated states, SLERP ensures that transitions are both smooth and physically meaningful, making it a useful tool wherever precise and continuous interpolation is required. Figure 3 visualizes the basic concepts of SLERP, contrasted with linear interpolation (LERP). A key aspect of this strategy is that the smooth, nonlinear path helps preserve the underlying structure of the model parameters. The sphere in this context represents the inherent structure of the model’s parameter space; by maintaining the geometric relationships between the parameters, SLERP ensures that the interpolation respects this structure and does not puncture it (as a linear combination of points would), leading to a more meaningful and coherent blending of capabilities rather than random, unstructured changes.
Because the merged points are both congruent with the model geometry (that is, they lie on the sphere used here for demonstration) and located at points not previously accessed, emergent features and capabilities could potentially be unlocked. The smoothness and spherical symmetry assumed in SLERP help preserve angular relationships between parameters, avoiding the high-loss regions typically encountered in linear interpolation. This is especially beneficial for materials science applications, where parameter-space discontinuities and asymmetries are common. SLERP can thus enable better generalization and the development of emergent capabilities, making it a powerful tool in this domain.
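The geometric contrast in Fig. 3 can be checked numerically. The sketch below assumes two orthogonal unit vectors in 3D as stand-ins for normalized parameter vectors and interpolates at t = 0.3 and t = 0.7 (the intermediate points highlighted in Fig. 3). It is a textbook SLERP illustration, not the merging code used in this work.

```python
import numpy as np


def lerp(p1, p2, t):
    """Linear interpolation: a straight chord through the sphere."""
    return (1.0 - t) * p1 + t * p2


def slerp(p1, p2, t, eps=1e-8):
    """Spherical linear interpolation between two unit vectors p1 and p2."""
    omega = np.arccos(np.clip(np.dot(p1, p2), -1.0, 1.0))  # angle between the points
    if np.sin(omega) < eps:
        # (Anti)parallel points: SLERP is ill-conditioned, fall back to LERP.
        return lerp(p1, p2, t)
    return (np.sin((1.0 - t) * omega) * p1 + np.sin(t * omega) * p2) / np.sin(omega)


p1 = np.array([1.0, 0.0, 0.0])
p2 = np.array([0.0, 1.0, 0.0])
for t in (0.3, 0.7):
    s, l = slerp(p1, p2, t), lerp(p1, p2, t)
    print(f"t={t}: |SLERP|={np.linalg.norm(s):.3f}, |LERP|={np.linalg.norm(l):.3f}")
```

For these two vectors, both SLERP points have norm 1.000 (they stay on the sphere), whereas both LERP points have norm √0.58 ≈ 0.762, i.e., they cut through the interior of the sphere.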

SLERP interpolates between points p1 and p2 along a spherical path on the surface of the sphere, calculated as SLERP(t), where t is the interpolation parameter (see main text for equations). In contrast, LERP interpolates linearly between the same two points, following a straight line through the sphere. Intermediate points at 30% and 70% along both paths are highlighted, showing the difference in how SLERP and LERP handle interpolation. In the context of LLMs, SLERP is particularly effective for merging model parameters from different pre-trained models, facilitating the emergence of new abilities that neither parent model possessed alone. The smooth, nonlinear path of SLERP helps preserve the underlying structure of the model parameters, represented by the unit sphere, potentially unlocking novel interactions between features that lead to enhanced performance and the development of emergent capabilities. The sphere in this context represents the inherent structure of the model’s parameter space; by maintaining the geometric relationships between the parameters, SLERP ensures that the interpolation respects this structure and does not puncture it (as the LERP points would), leading to a meaningful and coherent blending of capabilities rather than random, unstructured changes. A key point is that because the merged points are both congruent with the model geometry (that is, they lie on the sphere used here for demonstration) and located at points not previously accessed, emergent features and capabilities could potentially be unlocked.

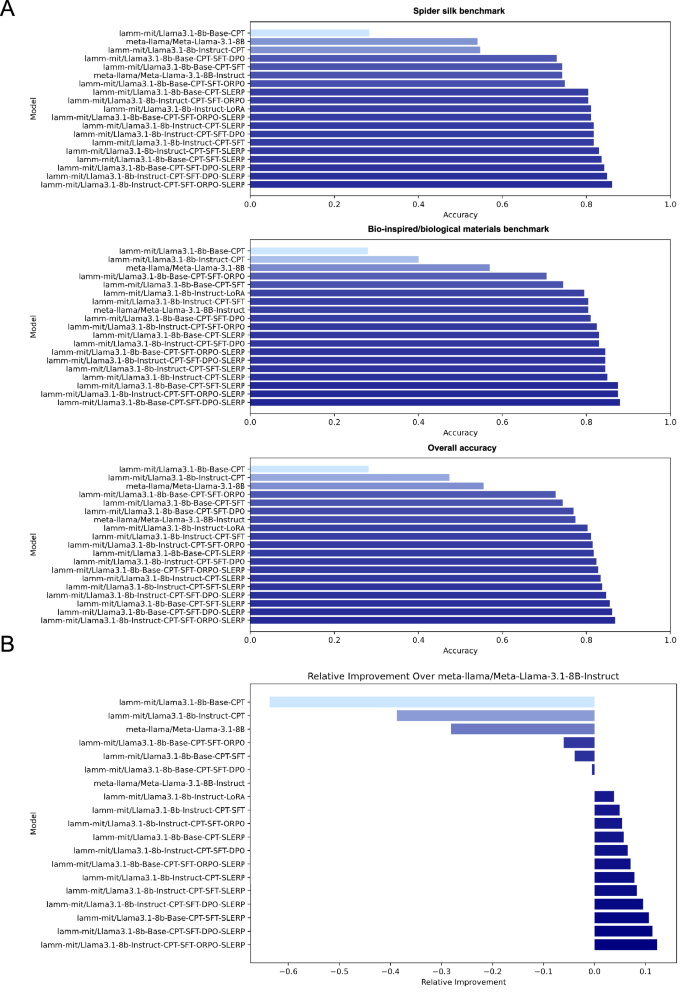

In the following, we present a series of results from assessment experiments conducted with different model families and training/merging strategies (for details on training, models, datasets, and assessment benchmarks, see the “Materials and methods” section). Figure 4 depicts performance evaluations of Llama-3.1 model variants across benchmarks. We use two models as the foundation for our training. The first is meta-llama/Meta-Llama-3.1-8B, the base model of the Llama family, which has been neither fine-tuned nor aligned. The second is meta-llama/Meta-Llama-3.1-8B-Instruct, which has been fine-tuned and aligned to conduct question-answer interactions, along with a host of other capabilities6. Except for the LoRA case14, all of our experiments include CPT (see Table 1 for an overview of the training stages and acronyms used) as the first step, with the aim of endowing the base model with domain knowledge from our materials science corpus of papers and from distilled, extracted, and processed data sourced from scientific studies. We then implement a range of variations, such as CPT only, CPT-SFT, CPT-SFT-ORPO, and CPT-SFT-DPO. At each stage, we also merge the model with meta-llama/Meta-Llama-3.1-8B-Instruct. Overall, the models that have undergone SLERP merging (especially those combined with DPO and ORPO strategies) generally show the highest accuracy across benchmarks. The best strategy without model merging is Instruct-CPT-SFT-DPO.

A Accuracy results for various model variants on different benchmarks: Spider Silk, Bio-inspired/Biological Materials, and Overall Accuracy. The models were evaluated after undergoing different training and optimization strategies (Continued Pre-Training (CPT), Supervised Fine-Tuning (SFT), Direct Preference Optimization (DPO)/Odds Ratio Preference Optimization (ORPO), and model merging). B Relative improvement of model variants over the meta-llama/Meta-Llama-3.1-8B-Instruct baseline model. This highlights how each training strategy contributes to the model’s performance gains or losses across the various benchmarks, providing insight into the effectiveness of different approaches. It is notable that models that underwent CPT, SFT, and to some extent preference optimization (e.g., DPO, ORPO) show a deterioration in performance, as indicated by negative relative improvement values. However, after applying the Spherical Linear Interpolation (SLERP) merging technique, these same models exhibit significant performance gains, surpassing the baseline model. This demonstrates the effectiveness of model merging in combining the strengths of different specialized models, resulting in a robust final model with superior overall performance. Overall, the models that have undergone SLERP merging (especially those combined with DPO and ORPO strategies) generally show the highest accuracy across benchmarks. Merging in this case is always done with meta-llama/Meta-Llama-3.1-8B-Instruct. All models have been trained with the same datasets in all stages, as shown in Table 6.
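A minimal sketch of the relative-improvement metric used in Figs. 4B and 5B, assuming it is the fractional change of a variant's score with respect to the baseline, (P − P_baseline)/P_baseline. The exact definition is not spelled out here, so treat this as an assumption; the function name and the scores in the example are hypothetical.

```python
def relative_improvement(score, baseline):
    """Assumed definition: (score - baseline) / baseline.

    Negative values mean the variant underperforms the baseline.
    """
    if baseline == 0:
        raise ValueError("baseline score must be non-zero")
    return (score - baseline) / baseline


# Hypothetical accuracies, for illustration only (not results from this study).
baseline = 0.70
variants = {"Instruct-CPT-SFT": 0.66, "Instruct-CPT-SFT-SLERP": 0.77}
for name, score in variants.items():
    print(f"{name}: {100 * relative_improvement(score, baseline):+.1f}%")
```

Under this definition, a variant scoring 0.77 against a 0.70 baseline corresponds to a +10% relative improvement.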

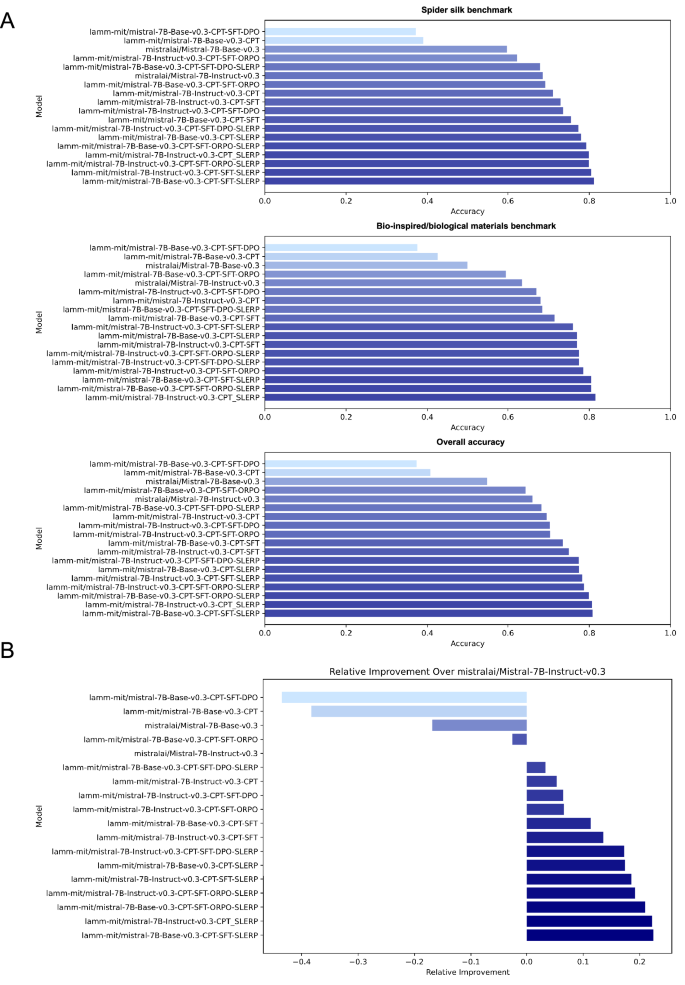

We now conduct the same series of experiments using Mistral-v0.3 model variants4. As in the previous set of results, we use the same dataset across all cases, and we present both non-merged cases and merges with the mistralai/Mistral-7B-Instruct-v0.3 model. Figure 5 provides an overview of the performance evaluations across benchmarks for this case. As before, the models that have undergone SLERP merging generally show the highest accuracy across benchmarks. The best strategy without model merging is Base-CPT-SFT, although the performance of the Instruct-CPT-SFT strategy is very similar.

A Accuracy results for various Mistral-7B-v0.3 model variants on the Spider Silk, Bio-inspired/Biological Materials, and Overall Accuracy benchmarks. Initial models trained with Continued Pre-Training (CPT) and Supervised Fine-Tuning (SFT) show moderate performance. Models further optimized using Odds Ratio Preference Optimization (ORPO) or Direct Preference Optimization (DPO) exhibit significant improvements in accuracy across all benchmarks. Model merging results in further significant improvements. The relative improvements are even more pronounced than those seen in the Llama-3.1 models (here exceeding 20% versus around 12%), indicating the particular effectiveness of these techniques for the Mistral series. B Relative improvement of model variants over the baseline mistralai/Mistral-7B-Instruct-v0.3 model. The Base model subjected to CPT alone initially shows a decrease in relative performance. However, after SFT, ORPO, and especially after applying Spherical Linear Interpolation (SLERP) merging, particularly in combination with ORPO or DPO optimization, these models demonstrate substantial positive relative improvement, surpassing the baseline by a greater margin than the improvements seen in the Llama-3.1 models. This highlights the powerful impact of these combined strategies in enhancing the overall performance of the Mistral models. It is notable that a direct merge of the Base-CPT-SFT model yields strong performance, close to that of the Instruct-CPT-SFT strategy. Merging is always done with mistralai/Mistral-7B-Instruct-v0.3. The same training set is used for all experiments, as defined in Table 6.

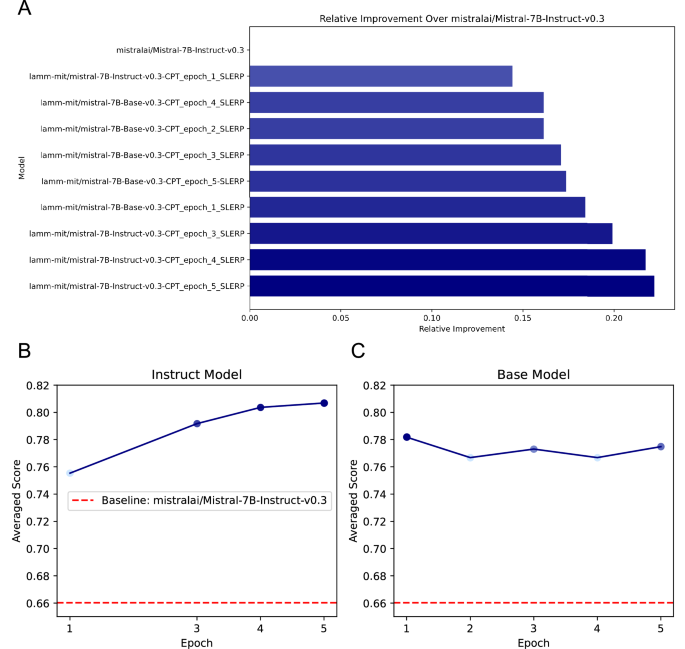

The CPT stage involves five epochs. To explore the effect of the number of epochs in this phase, we computed the performance of direct CPT-SLERP merges for the Mistral models using checkpoints from different training epochs. Note that the merges assessed in Fig. 5 (as well as the subsequent SFT and DPO/ORPO training stages) were all based on the CPT results from epoch 5. Figure 6 compares averaged scores across different epochs for both the Base and Instruct models, using the SLERP method. Figure 6A shows an overview of the results, in a format similar to the earlier performance assessments, depicting performance across all models and CPT-epoch variants. Figure 6B shows the performance of the Instruct model as a function of the number of CPT training epochs, and Fig. 6C illustrates the performance of the Base model. The Instruct model improves consistently with each epoch, reaching its best score at epoch 5, which indicates that it benefits significantly from continued training. In contrast, the Base model shows more fluctuating performance, with its highest score at epoch 1, followed by slight declines and only a minor recovery at epoch 5. This suggests that while the Base model starts strong, it does not consistently improve with additional training, potentially indicating a saturation point. Both models, however, consistently outperform the baseline score set by the original mistralai/Mistral-7B-Instruct-v0.3 model, underscoring the effectiveness of the SLERP method, consistent with the earlier results. The more substantial improvement of the Instruct model over the baseline highlights its robustness in instruction-tuned tasks, making it the preferable choice for such applications, particularly when extended fine-tuning is feasible.

The baseline score for the mistralai/Mistral-7B-Instruct-v0.3 model is indicated by the red dashed line in both subplots. A shows an overview of the results, in a format similar to the earlier performance assessments (Figs. 4 and 5), showing performance across all models and Continued Pre-Training (CPT) epoch variants. B shows the performance of the Instruct model over five training epochs, while C illustrates the performance of the Base model over five epochs.

Detailed analysis of key factors in model merging

As the results in Figs. 4 and 5 reveal, SLERP appears to significantly improve model performance, which we attribute to its ability to respect the geometric properties of the parameter space. However, this analysis does not yet reveal whether there is a significant synergistic effect. To examine this, we plot the results differently, comparing the actual measured performance with an expected performance computed by simply averaging the scores of the two parent models. Formally, the performance of a merged model is denoted \({P}_{\text{merged};{P}_{1},{P}_{2}}\) (measured per benchmark), while the expected, averaged score E(P1, P2) is calculated as the linear average of the performances P1 and P2 of the two parent models:

$$E({P}_{1},{P}_{2})=\frac{{P}_{1}+{P}_{2}}{2}.$$
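The comparison against the diagonal reduces to a simple arithmetic check: a merge is synergistic when its measured score exceeds E(P1, P2). The sketch below computes the expectation and the deviation P_merged − E(P1, P2); the function names are illustrative, and the scores in the example are hypothetical.

```python
def expected_score(p1, p2):
    """Linear-average expectation E(P1, P2) = (P1 + P2) / 2."""
    return 0.5 * (p1 + p2)


def synergy(p_merged, p1, p2):
    """Deviation of the measured merged score from the linear expectation.

    Positive values correspond to points above the diagonal in an
    observed-versus-expected plot.
    """
    return p_merged - expected_score(p1, p2)


# Hypothetical parent and merged scores, for illustration only.
p_finetuned, p_instruct, p_merged = 0.60, 0.70, 0.78
print(f"expected={expected_score(p_finetuned, p_instruct):.2f}, "
      f"deviation={synergy(p_merged, p_finetuned, p_instruct):+.2f}")
```

Note that a positive deviation does not by itself imply that the merge beats the better parent; that stronger criterion corresponds to P_merged − max(P1, P2) > 0.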

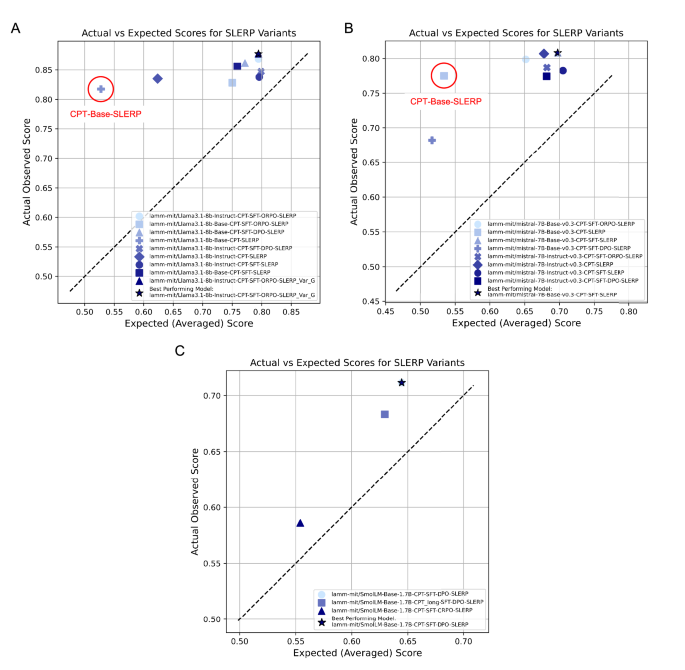

Using these metrics, Fig. 7 explores in detail the performance of SLERP variants for different cases, plotting the actual observed performance against the expected score, that is, the simple average of the scores of both parent models (linear combination).

The deviation from the diagonal shows clear nonlinear, synergistic effects, where the actual observed model performance is much greater than a simple averaging of the capabilities of the parent models alone. Results are shown for both the Llama-3.1 (A) and Mistral-7B-v0.3 (B) model series, respectively. C shows results for the much smaller SmolLM family of models, where the deviation of the observed score from the expected score is less pronounced, and the merged score even falls below the best performance of one of the pre-merged models (see Fig. 13 for a detailed analysis). Results for the Llama and Mistral models are similar across all experiments and show a clear nonlinear effect of model merging. As marked with a red circle (∘), the CPT-Base-SLERP strategy tends to yield some of the largest deviations from the expected score and is, at the same time, a relatively straightforward training strategy.

Notably, the strong deviation from the diagonal reveals nonlinear, synergistic effects, where the actual observed model performance is much greater than a simple averaging of the capabilities of the parent models alone. Results are shown for both the Llama-3.1-8B and Mistral-7B-v0.3 model series, respectively, for a variety of training strategies and datasets used in the process. We find that the results are similar for both models. An important distinction that can be seen in the analysis is that for the Llama models, the best-performing model (lamm-mit/Llama3.1-8b-Instruct-CPT-ORPO-SLERP) is based on the Llama Instruct model, whereas for the Mistral models, the best-performing model (lamm-mit/mistral-7B-Base-v0.3-CPT-SFT-SLERP) is based on the Mistral Base model.

To better understand the mechanics behind the observed effects, we briefly discuss the mathematical underpinnings of SLERP merging. Unlike linear interpolation, which assumes a flat Euclidean space, SLERP explores a richer parameter space by interpolating along a curved path on a unit sphere (see also Fig. 3). This approach allows SLERP to uncover regions in the parameter space that might represent combinations of parameters more effective than those found in either model alone. SLERP further balances the specialized knowledge learned by each model, combining their strengths without simply averaging them. By avoiding high-loss regions that linear interpolation might pass through, SLERP ensures a smoother transition, potentially leading to better generalization in the merged model. The nonlinear nature of SLERP’s path also accounts for the complex interactions between parameters, which can reveal beneficial interactions that a simple linear combination would miss. Furthermore, SLERP may act as a form of regularization, preventing overfitting to the idiosyncrasies of a single model’s training data and thus enhancing generalization. Finally, SLERP may help mitigate catastrophic forgetting, preserving knowledge from both models when one has been fine-tuned or trained after the other. Together, these factors make SLERP a powerful tool for model merging, leading to a merged model that often performs better than either of the original models on its own.

Hence, we believe that the observed effectiveness of SLERP in merging models can be attributed to its ability to enhance nonlinear interactions between parameters by exploring the spherical geometry of the parameter space. Given two sets of model parameters θ1 and θ2, each set can be viewed as a vector in a high-dimensional space. The interpolation performed by SLERP respects the curvature of this space, allowing for combinations of parameters that are not simply linear but involve deeper, nonlinear synergies (see Fig. 3). Consider θ1 and θ2 as consisting of individual components θ1,i and θ2,i in a given layer of the neural network. SLERP combines these parameters as follows:

$${\theta }_{i,\text{merged}}=\parallel {\theta }_{1}{\parallel }^{1-t}\parallel {\theta }_{2}{\parallel }^{t}\left(\frac{\sin ((1-t)\omega )}{\sin (\omega )}{\hat{\theta }}_{1,i}+\frac{\sin (t\omega )}{\sin (\omega )}{\hat{\theta }}_{2,i}\right)$$

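Here, θ̂1 = θ1/‖θ1‖ and θ̂2 = θ2/‖θ2‖ are the normalized parameter vectors (with components θ̂1,i and θ̂2,i), ω is the angle between them (cos ω = θ̂1 · θ̂2), and t ∈ [0, 1] is the interpolation parameter, with t = 0 recovering θ1 and t = 1 recovering θ2. This combination allows for interactions between θ1,i and θ2,i that are nonlinear in nature. For example, if θ1,i and θ2,i represent weights connected to different features in the network, their spherical combination could activate a new feature ϕi that is not present in either model individually: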

$${\phi }_{i}=f\left({\theta }_{i,\text{merged}}\cdot {x}_{i}\right)$$

where xi is the input feature and f(⋅) is the activation function. The nonlinear combination of parameters may lead to new behaviors or capabilities, as the interpolated parameters could synergistically enhance or suppress features in ways that the individual models cannot.
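The two equations above can be written compactly with NumPy. This is a minimal sketch assuming that each layer's weights are flattened into a single vector, that the angle ω is computed between the normalized vectors, and that f is a ReLU; it illustrates the equations and is not the merging implementation used for the experiments. The names `slerp_merge` and `feature` are hypothetical.

```python
import numpy as np


def slerp_merge(theta1, theta2, t, eps=1e-8):
    """Merge two flattened parameter vectors following the SLERP equation.

    Directions are interpolated along the unit sphere; norms are combined as
    ||theta1||**(1 - t) * ||theta2||**t.
    """
    n1, n2 = np.linalg.norm(theta1), np.linalg.norm(theta2)
    if n1 == 0 or n2 == 0:
        raise ValueError("parameter vectors must be non-zero")
    u1, u2 = theta1 / n1, theta2 / n2                      # normalized vectors
    omega = np.arccos(np.clip(np.dot(u1, u2), -1.0, 1.0))  # angle between them
    if np.sin(omega) < eps:
        # (Anti)parallel directions: SLERP is ill-conditioned, blend linearly.
        direction = (1.0 - t) * u1 + t * u2
    else:
        direction = (np.sin((1.0 - t) * omega) * u1 + np.sin(t * omega) * u2) / np.sin(omega)
    return n1 ** (1.0 - t) * n2 ** t * direction


def feature(theta_merged, x):
    """phi = f(theta_merged . x), with f assumed to be a ReLU."""
    return max(0.0, float(np.dot(theta_merged, x)))


rng = np.random.default_rng(0)
theta1, theta2 = rng.normal(size=16), rng.normal(size=16)  # stand-ins for one layer
merged = slerp_merge(theta1, theta2, t=0.5)
print(np.linalg.norm(merged), np.sqrt(np.linalg.norm(theta1) * np.linalg.norm(theta2)))
print(feature(merged, rng.normal(size=16)))
```

Because the interpolated direction stays on the unit sphere, the merged vector's norm equals the geometric mean of the parent norms at t = 0.5, so the two numbers on the first printed line agree.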

SLERP avoids destructive interference by maintaining the angular relationships between the parameter vectors, which can prevent the loss of specialized features learned by either model. The spherical symmetry imposed by SLERP introduces a regularization effect, smoothing the transition between the models and enabling the merged model to generalize better. This process often results in the emergence of new capabilities or improvements in performance that neither of the original models possessed.

The ability of SLERP to uncover these new capabilities can also be understood through the lens of overparameterization and the principles of ensemble methods. Overparameterized neural networks are known to generalize well, even when trained to zero error, due to their increased capacity to capture complex patterns34. SLERP leverages this capacity by combining parameters in a nonlinear fashion, effectively utilizing the high-dimensional space in which these parameters reside. As a result, the merged model can exhibit emergent properties that are not apparent in either of the original models. SLERP’s mechanism resembles ensemble methods, where combining diverse models leads to better generalization35. In this case, the diversity comes from the different training histories and learned features of the two models. The spherical interpolation pathway created by SLERP acts as a continuum of model ensembles, where at each point along the path, the combined parameters may activate new and beneficial feature interactions. SLERP not only preserves the strengths of the individual models but also has the potential to generate entirely new capabilities through its interpolation method. This makes it a useful tool for our goal of merging models that complement each other, or of creating a more versatile and generalizable model from existing pre-trained models.

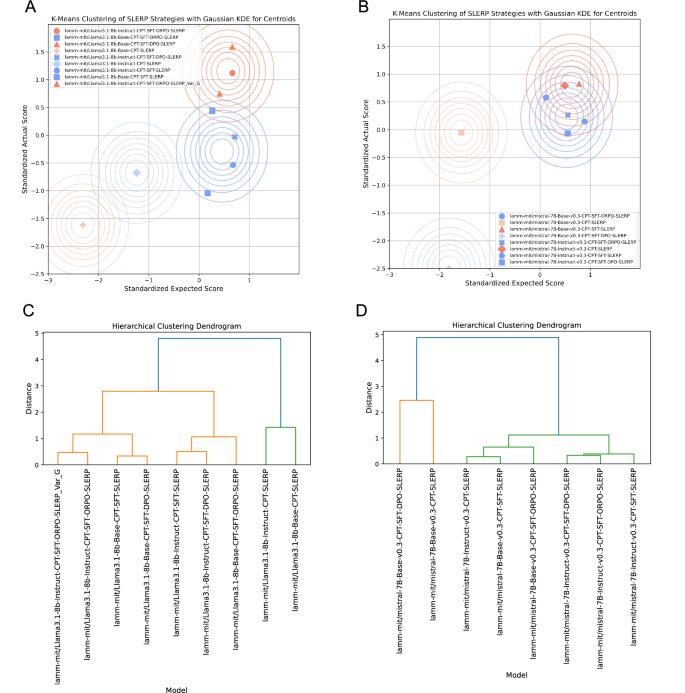

We examine variations and potential trends across the strategies explored using clustering analysis. Figure 8 provides a comprehensive clustering analysis of SLERP strategies applied to both the Llama-3.1-8b and Mistral-7B-v0.3 models, and of their resulting impact on performance. We use two methods. The first is K-Means clustering, a partition-based method that groups data into a predefined number of clusters by minimizing the sum of squared distances between data points and their cluster centroids, providing insight into the natural groupings of models based on their expected and actual performance. The second is hierarchical clustering, an agglomerative method that builds a tree-like structure, a dendrogram, to show the nested relationships between models at various levels of similarity, revealing the hierarchical organization and potential sub-groupings within the data.
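The K-Means step can be sketched in a few lines of NumPy using Lloyd's algorithm on z-scored (expected, actual) score pairs. This is an illustration under stated assumptions: the tooling and settings used for Fig. 8 are not specified here (a library implementation such as scikit-learn's `KMeans` would normally be used), and the six score pairs below are hypothetical.

```python
import numpy as np


def standardize(X):
    """Z-score each column (here: expected score and actual score)."""
    return (X - X.mean(axis=0)) / X.std(axis=0)


def kmeans(X, k, n_iter=100, seed=0):
    """Lloyd's algorithm; returns (labels, centroids)."""
    rng = np.random.default_rng(seed)
    centroids = X[rng.choice(len(X), size=k, replace=False)]  # k distinct data points
    for _ in range(n_iter):
        # Assignment step: nearest centroid by Euclidean distance.
        dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        # Update step: centroid = mean of its points (unchanged if the cluster is empty).
        new_centroids = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(new_centroids, centroids):
            break
        centroids = new_centroids
    return labels, centroids


# Hypothetical (expected, actual) scores for six merged models, illustration only.
scores = np.array([
    [0.70, 0.72], [0.71, 0.73], [0.69, 0.71],   # modest gain over expectation
    [0.72, 0.82], [0.73, 0.84], [0.71, 0.83],   # strong gain over expectation
])
labels, centroids = kmeans(standardize(scores), k=2)
print(labels)
```

Standardizing first keeps the expected and actual scores on a comparable scale, so neither axis dominates the Euclidean distances.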

A K-Means clustering of Llama-3.1-8b models using standardized expected and actual scores, with Gaussian KDE (Kernel Density Estimation) applied to visualize the centroids. The clustering reveals distinct groupings based on the performance outcomes of various SLERP strategies. B K-Means clustering of Mistral-7B-v0.3 models using the same approach as in (A). Similar to the Llama models, distinct clusters emerge, highlighting the different performance profiles of the models post-SLERP merging. C Hierarchical clustering dendrogram for the Llama-3.1-8b models based on the clustering analysis. D Hierarchical clustering dendrogram for the Mistral-7B-v0.3 models. The dendrograms in (C) and (D) reveal how different models cluster together, indicating which strategies yield similar performance outcomes. The comparison between the Llama-3.1-8b (C) and Mistral-7B-v0.3 dendrograms (D) shows that while both models respond well to SLERP strategies, the Mistral models exhibit more distinct clustering patterns.

Figure 8A illustrates the K-Means clustering of the Llama-3.1-8b models using standardized expected and actual scores, with Gaussian KDE (Kernel Density Estimation) applied to visualize the centroids. The analysis reveals distinct groupings that correspond to different SLERP strategies, indicating that specific merging techniques produce closely related performance outcomes. Figure 8B presents a similar K-Means clustering for the Mistral-7B-v0.3 models. Here too, distinct clusters emerge, showing that the SLERP strategies significantly influence the models’ performance profiles. Notably, the clustering patterns observed in the Mistral models are more pronounced than those of the Llama models, suggesting that the Mistral architecture might be more sensitive to these optimization and merging strategies.

Across both the Llama and Mistral models, the K-Means analysis clearly delineates two performance-based clusters. Models that incorporate multiple fine-tuning strategies, especially ORPO, consistently form clusters with higher actual scores, outperforming models that rely on simpler strategies. This suggests that the complexity and thoroughness of the fine-tuning process play a crucial role in achieving better model performance, as indicated by the clustering results. Next we explore hierarchical clustering as a way to better break down these distinctions.

To do this, we use a dendrogram analysis. A dendrogram is a tree-like diagram that displays the arrangement of clusters generated through hierarchical clustering. This visualization helps elucidate the relationships among the models, with closely related models (in terms of performance) clustering together. The dendrogram reveals that models employing similar training strategies are grouped into distinct subclusters, highlighting the effectiveness of these approaches in shaping model performance. Figure 8C shows the hierarchical clustering dendrogram for the Llama-3.1-8b models, and Fig. 8D that for the Mistral-7B-v0.3 models. The dendrograms show how different models cluster together, indicating similar performance outcomes. When comparing the dendrograms of the Llama and Mistral models, it becomes evident that while both models are positively influenced by SLERP strategies, the Mistral models show more defined clustering patterns. This suggests a stronger impact of the various strategies on the Mistral architecture.
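What a dendrogram encodes is the sequence of cluster merges and the distance at which each merge happens. The naive sketch below makes that explicit, assuming Euclidean distance and average linkage (the linkage criterion is not specified in the text); in practice, `scipy.cluster.hierarchy.linkage(..., method="average")` together with `scipy.cluster.hierarchy.dendrogram` would normally be used. The scores are hypothetical.

```python
import numpy as np


def agglomerative(X, method="average"):
    """Naive agglomerative clustering; returns the merge history.

    Each history entry is (cluster_a, cluster_b, distance), where clusters are
    tuples of original point indices. This is what a dendrogram draws.
    """
    if method not in ("average", "single"):
        raise ValueError("method must be 'average' or 'single'")
    D = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)  # pairwise distances
    clusters = [(i,) for i in range(len(X))]
    history = []
    while len(clusters) > 1:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                block = D[np.ix_(clusters[a], clusters[b])]
                d = block.mean() if method == "average" else block.min()
                if best is None or d < best[0]:
                    best = (d, a, b)
        d, a, b = best
        history.append((clusters[a], clusters[b], float(d)))
        merged = tuple(sorted(clusters[a] + clusters[b]))
        clusters = [c for i, c in enumerate(clusters) if i not in (a, b)] + [merged]
    return history


# Hypothetical (expected, actual) scores, z-scored per column.
scores = np.array([
    [0.70, 0.72], [0.71, 0.73], [0.69, 0.71],
    [0.72, 0.82], [0.73, 0.84], [0.71, 0.83],
])
Z = (scores - scores.mean(axis=0)) / scores.std(axis=0)
history = agglomerative(Z)
for left, right, dist in history:
    print(f"merge {left} + {right} at distance {dist:.2f}")
```

Subclusters formed early (at small distances) correspond to strategies with similar performance profiles; the last merge joins the most dissimilar groups.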

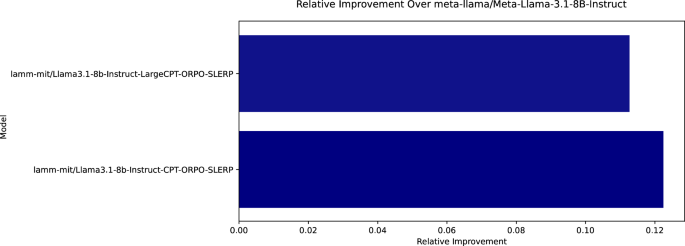

Figure 9 shows the effect of training the Llama series with a larger CPT dataset, the extended dataset of 8000 papers, which has a more varied format and contains more defective text. As can be seen, performance decreases, underscoring the importance of high-quality, clean data for positive training outcomes. As mentioned earlier, the extended dataset was constructed using a mix of PDF2Text and Nougat OCR36; we found these methods to yield more variable text quality. While Nougat, for instance, can successfully render equations in markup format, it also leads to relatively frequent occurrences of unknown symbols, page breaks, repeated characters, and other defects. These methods did not cause issues when the data was further processed into question-answer pairs, summaries, rational explanations, and so on, but there is an apparent negative effect on CPT, where the data is provided in raw format.

As can be seen, performance decreases, underscoring the effect of higher quality, clean data for positive training outcomes. Future experiments could be done to discern these effects more clearly, especially focusing on the effect of various training data compositions.

Likewise, a similar test with the extended dataset was conducted with the Mistral series of models. The variant trained on the original integrated dataset achieved the best overall benchmark score of 0.81, whereas the variant trained on the extended dataset achieved 0.80. Future experiments could assess the effect of this particular dataset variation on the performance of this model architecture. We leave this to future work, noting that the overall effects of model merging and of using Base vs. Instruct models as the starting point are stable, although the exact strategy that yields the best results differs: for the Llama and Mistral models, it was Instruct-CPT-SFT-ORPO-SLERP and Instruct-CPT-ORPO-SLERP, respectively. These observations are further complicated by the effect of prompting, which can skew results one way or the other. An overarching theme, however, is that SLERP merging consistently yields superior performance. As a straightforward and computationally efficient fine-tuning strategy, the Instruct-CPT-SLERP procedure is probably the best overall choice: while it does not yield the best performance in all scenarios, it generally yields strong performance. The differences show that nuanced benchmarking and prompt engineering can be critical.

Mechanistic analysis to elucidate key steps with highest impact on performance

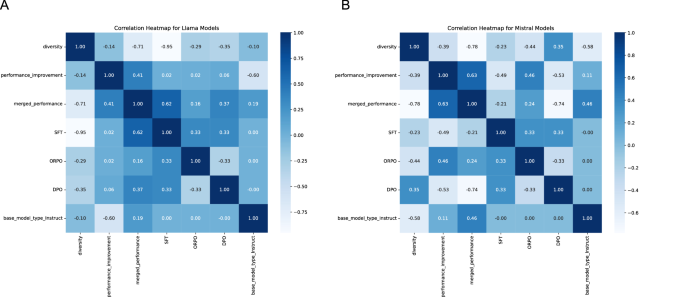

As a next step in the analysis, we use correlation heatmaps to illustrate the relationships between various model attributes and the performance of merged models. As shown in Fig. 10, the performance of a merged model is denoted as Pmerged, while the performances of the two parent models are denoted as P1 and P2. Performance improvement is defined as the difference between the performance of the merged model and the maximum performance of the two parent models:

$${\rm{Performance}}\, {\rm{Improvement}}={P}_{{\rm{merged}}}-\max ({P}_{1},{P}_{2})$$

Diversity between parent models is measured as the absolute difference between their individual performances:

$${\text{Diversity}}\,=| {P}_{1}-{P}_{2}|$$

The attributes considered include the diversity between parent models, the performance improvement relative to the parent models, Supervised Fine-Tuning (SFT), Direct Preference Optimization (DPO)/Odds Ratio Preference Optimization (ORPO), and whether the model is based on the Base or Instruct architecture. The correlation coefficients range from −1 to 1, with positive values indicating a direct relationship between the attribute and the merged model performance, and negative values indicating an inverse relationship. A Llama Models: SFT shows the strongest positive correlation with merged performance, suggesting that models incorporating SFT tend to achieve better results after merging. Conversely, diversity between parent models has a strong negative correlation with merged performance, implying that greater differences between parent models are associated with lower merged performance. ORPO and the Instruct architecture exhibit moderate positive correlations with merged performance, indicating that these factors also contribute positively, though less strongly than SFT. B Mistral Models: In the Mistral models, performance improvement shows a robust positive correlation with merged performance, particularly in instruction-tuned models, which also show a strong positive correlation between the base model type and merged performance. Diversity, however, exhibits a negative correlation with merged performance, similar to the Llama models, though the effect is less pronounced. ORPO demonstrates a moderate positive correlation with performance improvement, suggesting that this optimization method contributes to enhanced performance in Mistral models, albeit not as strongly as SFT in Llama models. The findings suggest that instruction-tuned base models and merging strategies play a crucial role in optimizing Mistral model performance, while diversity and SFT influence these outcomes differently in Llama models.

To elucidate overall trends that can be gleaned from the results, Fig. 10 depicts correlation heatmaps for the fine-tuned Llama and Mistral models. The data reveals distinct relationships between various metrics. In Llama models, a strong negative correlation between diversity and SFT suggests that higher diversity reduces reliance on supervised fine-tuning, whereas performance improvement shows moderate positive correlations with both merged performance and SFT, indicating that these factors contribute to improved outcomes. In contrast, Mistral models exhibit a more robust positive correlation between performance improvement and merged performance, especially in instruction-tuned models, where the Base model type significantly enhances merged performance. ORPO, while contributing to performance improvements in both models, has a more pronounced impact in Mistral models. Overall, the findings suggest that diversity tends to reduce SFT dependency, particularly in Llama models, while instruction-tuned Base models in Mistral benefit more from merging strategies, emphasizing the importance of model selection and optimization methods.
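The heatmap entries can be sketched as a Pearson correlation matrix over per-model attributes, with the training-stage attributes coded as 0/1 indicators (for binary variables, Pearson's r equals the point-biserial correlation). The correlation type and coding are assumptions, since they are not stated here, and the records below are hypothetical; in them the second parent is always the same Instruct model, mirroring the merging setup used throughout.

```python
import numpy as np

# Hypothetical per-model records, for illustration only (not data from this study):
# columns = P1, P2, P_merged, uses_SFT, uses_ORPO, is_Instruct
records = np.array([
    [0.62, 0.70, 0.74, 0, 0, 0],
    [0.66, 0.70, 0.77, 1, 0, 0],
    [0.64, 0.70, 0.78, 1, 1, 1],
    [0.60, 0.70, 0.71, 0, 1, 1],
    [0.67, 0.70, 0.79, 1, 1, 0],
])

p1, p2, p_merged = records[:, 0], records[:, 1], records[:, 2]
improvement = p_merged - np.maximum(p1, p2)  # P_merged - max(P1, P2)
diversity = np.abs(p1 - p2)                  # |P1 - P2|

names = ["Diversity", "Improvement", "SFT", "ORPO", "Instruct", "Merged"]
table = np.column_stack([diversity, improvement, records[:, 3], records[:, 4],
                         records[:, 5], p_merged])
corr = np.corrcoef(table, rowvar=False)      # Pearson correlation matrix (6 x 6)

for name, row in zip(names, corr):
    print(f"{name:>11s} " + " ".join(f"{v:+.2f}" for v in row))
```

With only a handful of models per family, such coefficients are sensitive to individual points, so they are best read as trends rather than precise effect sizes.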

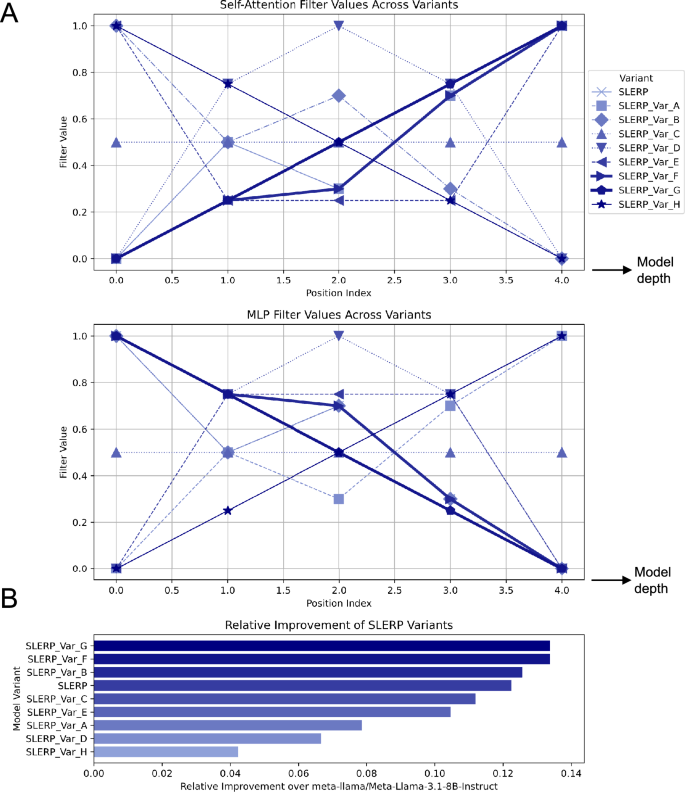

In model merging, there are several parameter choices, including the relative density of the parameters that are preserved across the layers of the LLMs being merged. This is exemplified in Fig. 3 for the two points merged at 30% vs. 70% along their SLERP paths. In Fig. 11 we conduct a systematic analysis of variants of the original SLERP merge used in the earlier examples, covering a range of alternative options, for the best-performing strategy in the case of the Llama-3.1 model variants (CPT-SFT-ORPO-SLERP). As depicted visually, we vary the self-attention filter values separately from the multilayer perceptron (MLP) values (Fig. 11A). Different weighting schemes are employed, starting from a reference case chosen based on earlier work29. The resulting performance measures are summarized in Fig. 11B, showing that Variants G and F perform best (where G is a simple linear progression across the depth of the LLM; detailed performance assessments for the other variants are also shown in Fig. 11).
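To make the interpolation concrete, here is a minimal NumPy sketch of SLERP between two weight tensors of the same shape, evaluated at t = 0.3 and t = 0.7 (mirroring the 30% vs. 70% points mentioned above). The function name, the colinearity threshold, and the random stand-in tensors are our own assumptions; merging toolkits typically apply such an interpolation tensor by tensor across the two checkpoints.

```python
import numpy as np

def slerp(t: float, v0: np.ndarray, v1: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Spherical linear interpolation between two same-shape weight tensors.

    t = 0 returns v0 and t = 1 returns v1. When the flattened tensors are
    (anti)parallel, SLERP is ill-conditioned, so we fall back to linear interpolation.
    """
    a, b = v0.ravel(), v1.ravel()
    # The angle is measured between normalized copies of the two tensors.
    a_n = a / (np.linalg.norm(a) + eps)
    b_n = b / (np.linalg.norm(b) + eps)
    dot = np.clip(np.dot(a_n, b_n), -1.0, 1.0)
    if abs(dot) > 1.0 - 1e-6:
        out = (1.0 - t) * a + t * b
    else:
        theta = np.arccos(dot)
        sin_theta = np.sin(theta)
        out = (np.sin((1.0 - t) * theta) / sin_theta) * a + (np.sin(t * theta) / sin_theta) * b
    return out.reshape(v0.shape)

# Random stand-ins for one layer's weights in two parent models (hypothetical).
rng = np.random.default_rng(0)
w_a = rng.standard_normal((4, 4))
w_b = rng.standard_normal((4, 4))
merged_30 = slerp(0.3, w_a, w_b)  # 30% of the way along the arc from w_a to w_b
merged_70 = slerp(0.7, w_a, w_b)  # 70% of the way along the arc
```

Unlike plain averaging, SLERP follows the arc between the two parameter vectors, so for unit-norm inputs the interpolant keeps unit norm at every t.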

The analysis shown here focuses on variants of the original Spherical Linear Interpolation (SLERP) merge used in the earlier examples, for the best-performing strategy in the case of the Llama-3.1 model variants (CPT-SFT-ORPO, then merge). As depicted visually, we vary the self-attention filter values separately from the multilayer perceptron (MLP) values (A). Different weighting schemes are employed, starting from a reference case chosen based on earlier work29. The resulting performance measures are summarized in (B), showing that Variants G and F perform best (where G is a simple linear progression).
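Layer-dependent merge weights of this kind can be sketched by expanding a handful of anchor values into one interpolation factor t per layer, with separate curves for self-attention and MLP parameters. In the sketch below the anchor values, the `layer_schedule` and `t_for` helpers, and the Hugging Face-style parameter-name matching are all hypothetical; they do not reproduce the paper's reference case or Variants F and G, although the "linear" entry shows what a simple linear progression over depth looks like.

```python
import numpy as np

def layer_schedule(anchors, num_layers: int) -> np.ndarray:
    """Expand a few anchor values into one interpolation factor t per layer.

    Anchors are spaced evenly from the first to the last layer and linearly
    interpolated in between, giving a smooth 'gradient' over depth.
    """
    anchors = np.asarray(anchors, dtype=float)
    anchor_pos = np.linspace(0.0, num_layers - 1, num=len(anchors))
    return np.interp(np.arange(num_layers), anchor_pos, anchors)

NUM_LAYERS = 32  # Llama-3.1-8B has 32 transformer blocks

# Hypothetical schedules: attention and MLP weights follow different curves.
schedules = {
    "self_attn": layer_schedule([0.0, 0.5, 0.3, 0.7, 1.0], NUM_LAYERS),
    "mlp": layer_schedule([1.0, 0.5, 0.7, 0.3, 0.0], NUM_LAYERS),
    "linear": layer_schedule([0.0, 1.0], NUM_LAYERS),  # plain linear ramp over depth
}

def t_for(param_name: str, layer: int, default: float = 0.5) -> float:
    """Choose t for a parameter from its (assumed Hugging Face-style) name."""
    if "self_attn" in param_name:
        return float(schedules["self_attn"][layer])
    if "mlp" in param_name:
        return float(schedules["mlp"][layer])
    return default  # embeddings, norms, etc. use a single fixed t
```

Each t returned here would then be passed to a per-tensor SLERP when merging that parameter.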

Contrasting assessments with very small LLMs

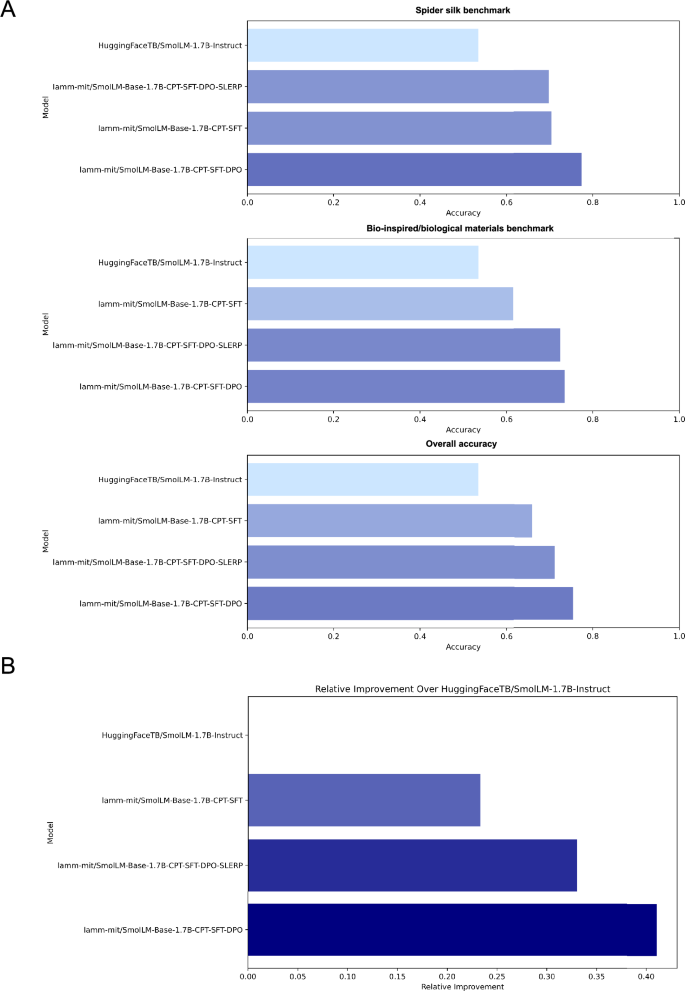

While the models studied earlier were modest in size, around 7–8 billion parameters, recent research has produced even smaller yet capable models that are particularly attractive for edge computing applications or for deployment on devices such as mobile phones or robotic systems. We now examine whether such models also show the marked effects of model merging observed earlier. We conduct this analysis using the SmolLM model series, specifically the 1.7 billion parameter model. This choice is partially motivated by the complete open access to the model, its training strategy, and its training data. As in the earlier analyses, we start with the base model and successively apply CPT, SFT and DPO (we found that for this small model, DPO worked better than ORPO). Although the fine-tuned SmolLM variants never reach the absolute performance of the fine-tuned 7B or 8B models, in almost all fine-tuning cases we find the largest performance gains relative to the original SmolLM model, with the CPT-SFT-DPO version being the top-performing variant.
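For reference, the DPO objective used in the preference-optimization step can be written, for a single preference pair, as a logistic loss on the difference between the policy-to-reference log-ratios of the chosen and rejected responses. The sketch below is a plain-Python illustration of that published formula, not the training code used here. The value beta = 0.1 and the toy log-probabilities are assumptions.

```python
import math

def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """DPO loss for one preference pair.

    Inputs are log-probabilities of whole responses (summed over tokens) under
    the model being trained (policy) and under a frozen reference model.
    """
    # How much more (or less) likely each response became relative to the reference.
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    z = beta * (chosen_logratio - rejected_logratio)
    # -log(sigmoid(z)) is identical to softplus(-z)
    return softplus(-z)

# Hypothetical numbers: an unchanged policy vs. one that favors the chosen answer.
loss_neutral = dpo_loss(-50.0, -60.0, -50.0, -60.0)   # equals log(2)
loss_improved = dpo_loss(-45.0, -62.0, -50.0, -60.0)  # smaller than log(2)
```

Unlike ORPO, DPO needs the frozen reference model's log-probabilities, which is why four inputs appear per pair.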

As depicted in Fig. 12, while we observe a significant emergence of new capabilities when applying SLERP to language models in the 7B and 8B parameter range, these emergent behaviors are absent in smaller models, such as those with 1.7B parameters. This may suggest a threshold effect, where SLERP’s potential to unlock novel abilities is contingent on model size. Smaller models may lack the complexity of the 7B–8B models, which have notably richer high-dimensional parameter spaces and capabilities, especially for reasoning and knowledge recall. These findings underscore the importance of model scale in the manifestation of emergent properties and provide insights into the interplay between interpolation techniques and model complexity. Our results contribute to the broader understanding of scaling laws in neural networks, highlighting conditions under which advanced capabilities may be realized. A summary of the observed performance relative to the expected, averaged performance of the base model is shown in Fig. 7C.
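One simple way to quantify such emergent effects is to compare a merged model's score with an "expected" score. The sketch below assumes the expected score is the arithmetic mean of the two parent models' scores; this is our reading of "expected, averaged performance" and may differ from the exact definition behind Fig. 7C. The `merge_gain` helper and all scores are hypothetical.

```python
from statistics import mean

def merge_gain(merged_score: float, parent_scores: list) -> dict:
    """Compare a merged model's score with the mean and with the best of its parents."""
    expected = mean(parent_scores)  # assumed definition of the "expected" score
    return {
        "expected": expected,
        "gain_over_expected": merged_score - expected,
        "gain_over_best_parent": merged_score - max(parent_scores),
    }

# Hypothetical benchmark accuracies (fraction of questions answered correctly).
large_model = merge_gain(0.80, [0.70, 0.74])  # merged model beats both parents
small_model = merge_gain(0.60, [0.58, 0.62])  # merged model sits between its parents
```

A positive gain over the best parent indicates a capability that neither parent showed on its own, whereas a merged score at or below the parent average corresponds to the behavior observed for the small model.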

A Accuracy results for various SmolLM-1.7B model variants on the Spider Silk, Bio-inspired/Biological Materials, and Overall Accuracy benchmarks. The HuggingFaceTB/SmolLM-1.7B-Instruct model serves as the baseline, although all training starts from the Base model (HuggingFaceTB/SmolLM-1.7B). While applying Continued Pre-Training (CPT) and Supervised Fine-Tuning (SFT) improves performance, adding Direct Preference Optimization (DPO) yields further accuracy gains. However, unlike in the much larger Llama or Mistral models, Spherical Linear Interpolation (SLERP) merging does not yield the best overall performance here. This is shown more clearly in (B), which plots the relative improvement over the baseline model (HuggingFaceTB/SmolLM-1.7B-Instruct) across the benchmarks. Notably, SLERP combined with DPO yields a slight reduction in performance relative to the CPT-SFT-DPO case, in stark contrast to the earlier results for Llama and Mistral. The emergent behaviors triggered by SLERP in larger models are not observed here, indicating a potential threshold effect. This suggests that SLERP’s ability to unlock novel capabilities may depend on the scale of the model, with smaller models like the 1.7B parameter SmolLM failing to exhibit these emergent properties. These findings underscore the critical role of model scale in realizing advanced capabilities, contributing to the broader understanding of scaling laws in neural networks. Note that in (B) the top bar is zero because this model is used as the reference for computing the improvement. It is kept in the visualization for consistency with the earlier plots (where some variants showed performance degradation, that is, negative values).
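A minimal sketch of a relative-improvement computation like the one in (B) follows. It assumes improvement is expressed as a percentage change in accuracy with respect to the reference model; an absolute difference in accuracy points would be an equally plausible convention. The reference model name follows the caption, while the other model labels and all accuracy values are hypothetical.

```python
def relative_improvement(scores: dict, reference: str) -> dict:
    """Percentage change of each model's accuracy relative to the reference, per benchmark."""
    ref = scores[reference]
    return {
        model: {bench: 100.0 * (acc - ref[bench]) / ref[bench] for bench, acc in accs.items()}
        for model, accs in scores.items()
    }

# Hypothetical accuracies, for illustration only.
scores = {
    "HuggingFaceTB/SmolLM-1.7B-Instruct": {"spider_silk": 0.40, "bio_materials": 0.50},
    "SmolLM-1.7B-CPT-SFT": {"spider_silk": 0.44, "bio_materials": 0.52},
    "SmolLM-1.7B-CPT-SFT-DPO": {"spider_silk": 0.50, "bio_materials": 0.55},
}
improvement = relative_improvement(scores, "HuggingFaceTB/SmolLM-1.7B-Instruct")
# The reference row is all zeros, which is why its bar has zero length in such a plot.
```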

Further quantification of the effects of model merging across all model architectures

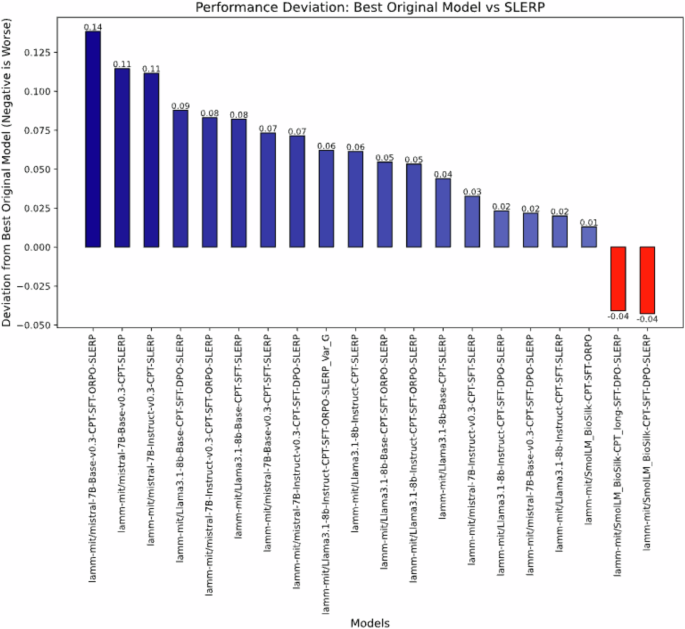

To better understand whether, and to what degree, model merging improves performance over both of the models used for merging, we present the analysis shown in Fig. 13. The plot shows the performance deviation of SLERP-merged models relative to the best original model used as a source.

The plot illustrates the deviation in performance between models merged using SLERP and their best-performing original counterparts (either without SLERP or with instruction tuning). The deviation is calculated as the SLERP model’s performance minus the best original model’s performance. Negative deviations, where SLERP underperforms relative to the best original model, are highlighted in red. Positive deviations, indicating better performance of the SLERP model, are shown in shades of blue, with darker blue representing greater improvements. Models are ordered from the largest improvement to the largest underperformance.
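The ranking and color coding described in this caption can be sketched in a few lines of Python. Here the deviation is taken as the SLERP score minus the best source score, so a negative value means the SLERP model underperforms, matching the red/blue coding. The model names and scores are hypothetical. Scaling the blue shade by the largest observed gain, and treating a zero deviation as the lightest blue, are our own choices.

```python
def merge_deviations(results: dict) -> list:
    """Rank merged models by their deviation from the best source model.

    results maps a merged-model name to (merged_score, [source_scores, ...]).
    Returns (name, deviation, color, shade) tuples, largest improvement first.
    """
    rows = [(name, merged - max(sources)) for name, (merged, sources) in results.items()]
    rows.sort(key=lambda row: row[1], reverse=True)
    best_gain = max((dev for _, dev in rows if dev > 0), default=0.0)
    ranked = []
    for name, dev in rows:
        if dev < 0:
            color, shade = "red", None  # underperformance: flat red
        else:
            # Shade in [0, 1]; values closer to 1 mean darker blue (larger gain).
            color, shade = "blue", (dev / best_gain if best_gain > 0 else 0.0)
        ranked.append((name, dev, color, shade))
    return ranked

# Hypothetical scores, for illustration only.
results = {
    "merge_A": (0.82, [0.75, 0.78]),
    "merge_B": (0.70, [0.72, 0.69]),
    "merge_C": (0.79, [0.74, 0.77]),
}
ranking = merge_deviations(results)
```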


Interactive examples for open-ended cross-material reasoning and material design tasks

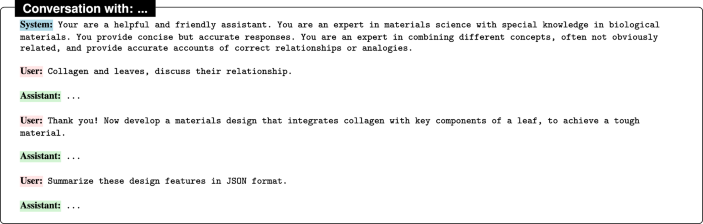

In our next experiment, we conduct interactive conversations with a set of the models, using consistent system prompts and identical user input. We aim to test the multi-turn capabilities of the models, their responsiveness to system prompts and instructions, and their capability to produce structured output (JSON). We further assess the quality of each model’s synthesis along a set of criteria that include depth of reasoning, creativity, clarity, and whether or not quantitative predictions are featured. Each of the conversations unfolds as follows:

Figures 14–18 present the results of conversations between a human user and a selection of five models (the best-performing models and the DPO-trained models):

lamm-mit/Llama3.1-8b-Instruct-CPT-SFT-DPO.

lamm-mit/Llama3.1-8b-Instruct-CPT-SFT-ORPO-SLERP-Var_G.

lamm-mit/mistral-7B-v0.3-Base-CPT-SFT-DPO.

lamm-mit/mistral-7B-v0.3-Base-CPT-SFT-SLERP.

lamm-mit/SmolLM-Base-1.7B-CPT-SFT-DPO.

All five models demonstrate a strong ability to connect seemingly unrelated concepts, such as collagen and leaves, and to propose innovative material designs. The output features rich Markdown formatting (note that the raw source is shown here). The models show a consistent understanding of the biological and materials science concepts involved, suggesting that their fine-tuning on domain-specific content has been effective. The differences between the responses mainly pertain to the depth of explanation, the creativity of the proposed designs, and the clarity of the output in both natural language and structured formats like JSON.
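Checking the structured-output part of such conversations can be partly automated. Below is a minimal sketch that assumes the JSON summary appears somewhere inside a free-text (Markdown) answer: it scans for the first substring that parses as a JSON object, using the standard library's `json.JSONDecoder.raw_decode`. The helper name, the required keys, and the example reply are hypothetical.

```python
import json

def extract_first_json_object(text: str):
    """Return the first JSON object embedded in free text, or None if there is none."""
    decoder = json.JSONDecoder()
    start = text.find("{")
    while start != -1:
        try:
            # raw_decode parses one JSON value and ignores whatever text follows it.
            obj, _end = decoder.raw_decode(text[start:])
            if isinstance(obj, dict):
                return obj
        except json.JSONDecodeError:
            pass  # not valid JSON at this brace; try the next one
        start = text.find("{", start + 1)
    return None

# Hypothetical model reply that mixes prose with a JSON summary.
reply = (
    "Here is the proposed design.\n"
    '{"material": "collagen-cellulose composite", '
    '"components": ["collagen fibrils", "cellulose nanofibers"]}\n'
    "Let me know if you need changes."
)
summary = extract_first_json_object(reply)
has_required_keys = summary is not None and {"material", "components"}.issubset(summary)
```

Whether the JSON parses, and whether it contains the requested fields, gives a simple pass/fail signal to complement the qualitative comparison below.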

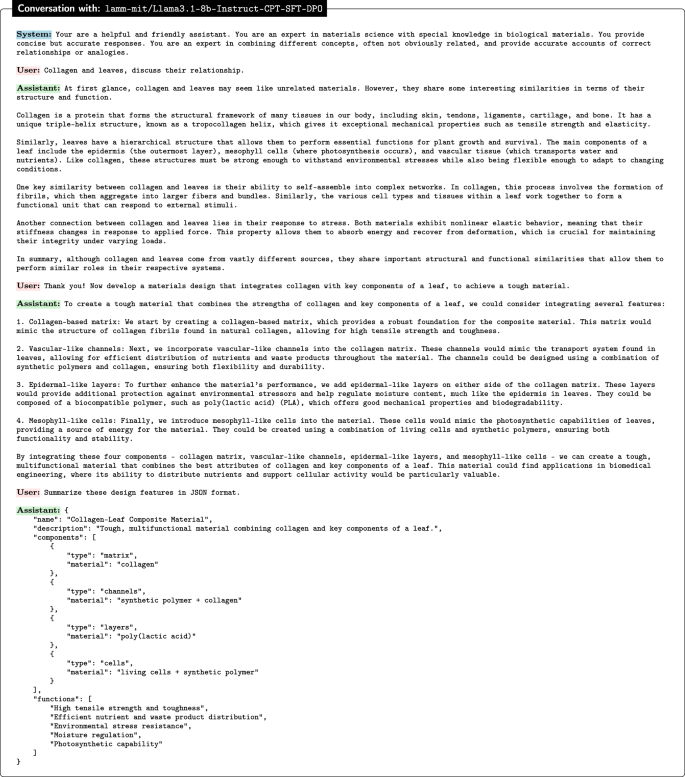

As shown in Fig. 14, the model lamm-mit/Llama3.1-8b-Instruct-CPT-SFT-DPO provides a well-organized and detailed discussion, drawing clear parallels between the structure and function of collagen and leaves. The proposed material design is robust, incorporating key components like a collagen-based matrix, vascular-like channels, and mesophyll-like cells. The response is notable for its comprehensive breakdown of each component’s role, leading to a thorough and scientifically grounded design. The JSON summary is precise, reflecting the structure of the proposed design effectively.

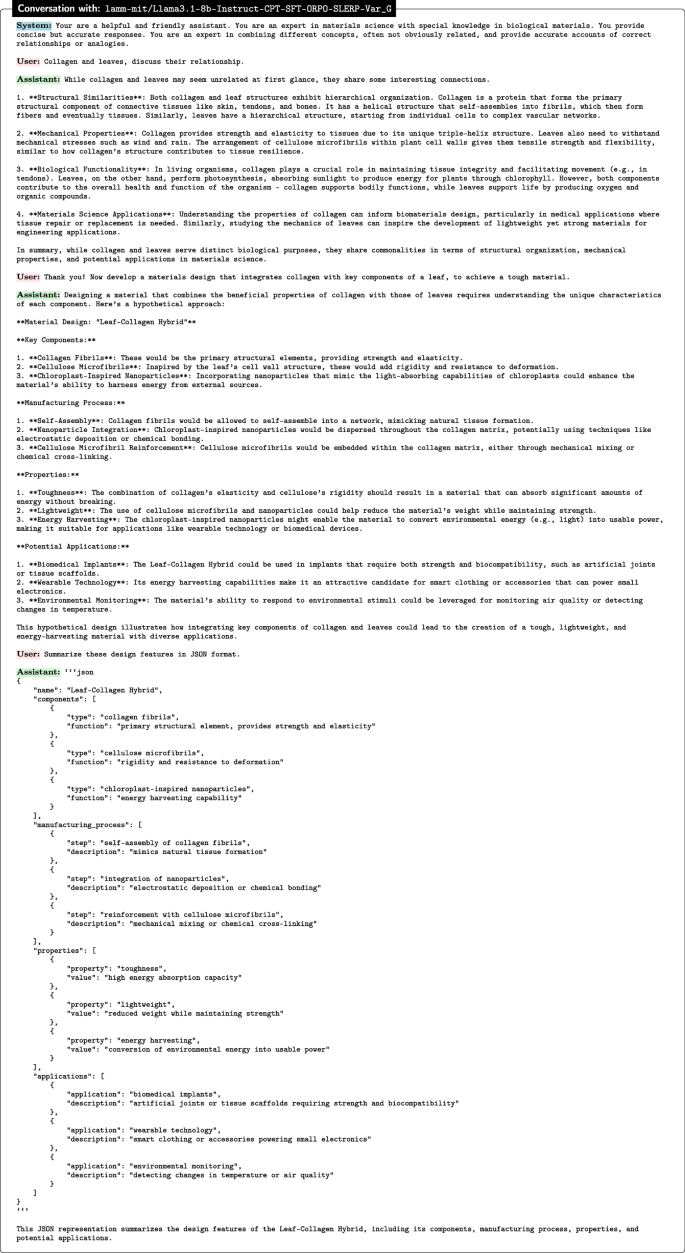

Figure 15 shows results for the lamm-mit/Llama3.1-8b-Instruct-CPT-SFT-ORPO-SLERP-Var_G model. This model delivers a more concise but still insightful analysis of collagen and leaves. The material design focuses on integrating collagen fibrils with cellulose microfibrils and chloroplast-inspired nanoparticles, an inventive approach to enhancing the material’s properties. This model excels in identifying potential applications of the designed material, showcasing a broader vision for its use. The JSON representation is clear and well-structured, effectively summarizing the design features.

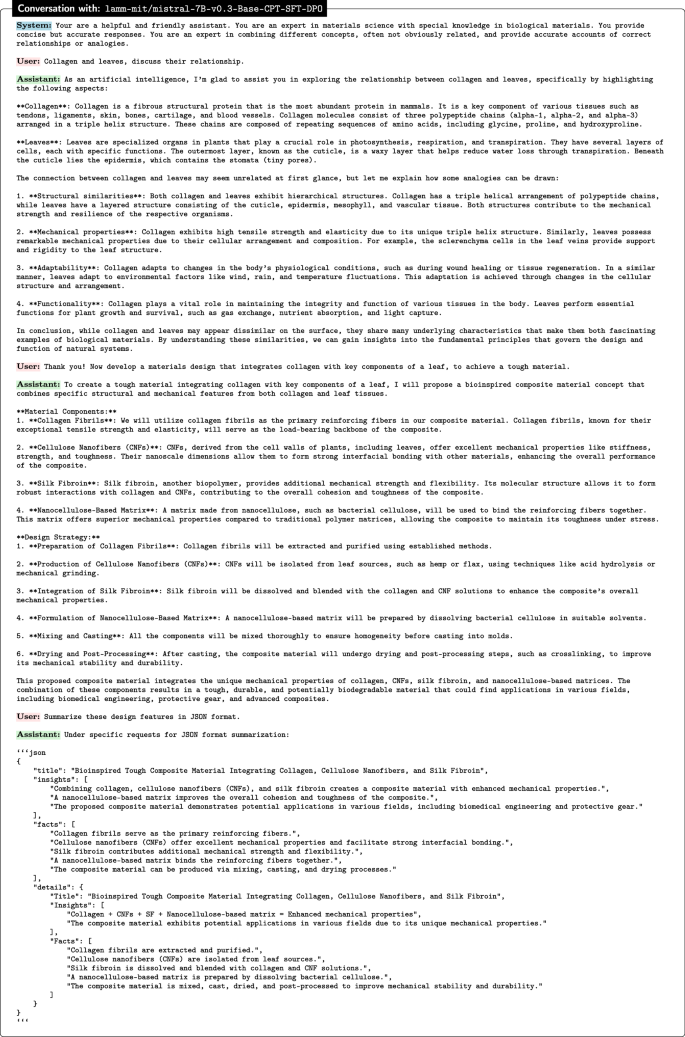

Figure 16 captures results of the lamm-mit/mistral-7B-v0.3-Base-CPT-SFT-DPO model. The responses are found to be comprehensive, with a strong focus on the mechanical properties of collagen and leaves. The proposed material design is detailed, incorporating collagen fibrils, cellulose nanofibers, silk fibroin, and a nanocellulose-based matrix. This model particularly stands out for its emphasis on the integration of these components to enhance the material’s toughness and durability. The JSON summary is thorough, capturing the complexity of the design and its potential applications.

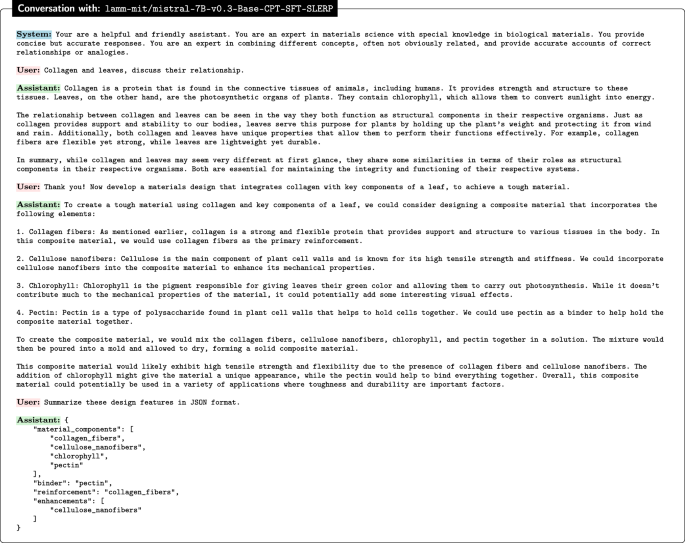

Next, Fig. 17 shows results for the lamm-mit/mistral-7B-v0.3-Base-CPT-SFT-SLERP model. This result illustrates a more straightforward and less detailed analysis compared to the others. While the connection between collagen and leaves is adequately explained, the material design is simpler, focusing on collagen fibers, cellulose nanofibers, chlorophyll, and pectin. This response is notable for its clarity and simplicity, making it accessible but perhaps lacking the depth seen in other models. The JSON summary is basic but effective in conveying the key elements of the design.

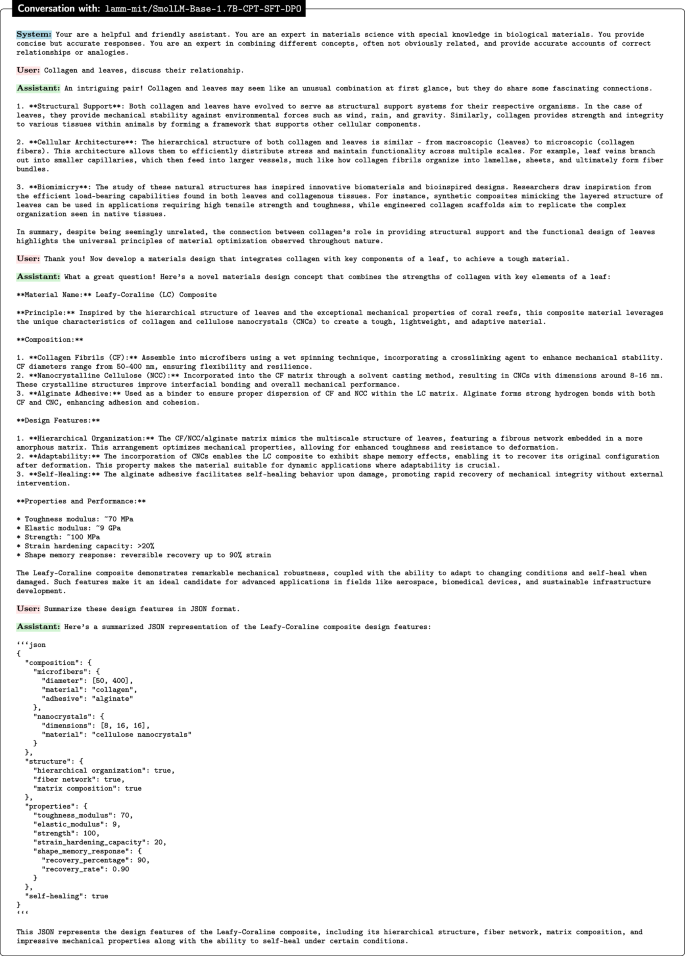

Finally, Fig. 18 showcases the results of the smallest model in this study, lamm-mit/SmolLM-Base-1.7B-CPT-SFT-DPO. The model offers an inventive, creative, and highly detailed response, integrating a broad range of components such as collagen fibrils, nanocrystalline cellulose, and an alginate adhesive, resulting in a material creatively referred to as “Leafy-Coraline (LC) Composite”. This model excels in proposing a novel composite material with self-healing and shape-memory properties, reflecting a relatively high level of creativity and technical understanding. The JSON summary is comprehensive, capturing the innovative aspects of the design and its potential applications effectively. Despite its small size, this model provides excellent responses.

A summary of key observations is shown in Table 2. Each model demonstrates strengths in different areas, from detailed explanations and innovative designs to clear and concise JSON summaries. The variations in depth, creativity, and technical detail among the models highlight the diversity of approaches and suggest that each may be suited to different types of tasks or applications. Overall, these models provide a strong foundation for further exploration and development in the field of bio-inspired materials design. Table 3 shows a detailed breakdown, obtained by sharing the raw text of the conversations with GPT-4o and prompting it to identify evaluation criteria and assess the different model versions. We emphasize that GPT-4o is used as a supporting evaluator, not the definitive authority. Compared with human assessment, LLM-based evaluation reduces subjectivity and improves reproducibility across tasks and models. The potential of LLMs as evaluators has been explored in refs. 37,38 and evaluated in refs. 39,40,41, and the use of GPT-4o in this role is supported by studies beyond OpenAI’s own report42.
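When an LLM judge is used this way, its per-criterion scores still have to be aggregated before models can be compared. Here is a minimal sketch, assuming the judge returns a numeric score for each criterion. The criteria are those named earlier for the conversations; the model names, scores, and equal default weights are hypothetical and do not reproduce Table 3.

```python
from typing import Dict, List, Optional, Tuple

CRITERIA = ["depth_of_reasoning", "creativity", "clarity", "quantitative_predictions"]

def rank_by_judge(scores: Dict[str, Dict[str, float]],
                  weights: Optional[Dict[str, float]] = None) -> List[Tuple[str, float]]:
    """Weighted mean judge score per model over CRITERIA, sorted best first."""
    weights = weights or {c: 1.0 for c in CRITERIA}
    total_weight = sum(weights[c] for c in CRITERIA)
    ranked = [
        (model, sum(weights[c] * s[c] for c in CRITERIA) / total_weight)
        for model, s in scores.items()
    ]
    return sorted(ranked, key=lambda item: item[1], reverse=True)

# Hypothetical judge output on a 1-10 scale.
judge_scores = {
    "model_a": {"depth_of_reasoning": 9, "creativity": 7, "clarity": 8, "quantitative_predictions": 4},
    "model_b": {"depth_of_reasoning": 7, "creativity": 9, "clarity": 9, "quantitative_predictions": 5},
}
judge_ranking = rank_by_judge(judge_scores)
```

Making the weights explicit shows how much a ranking depends on which criteria are emphasized, which is one reason the judge serves as a supporting rather than definitive evaluator.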

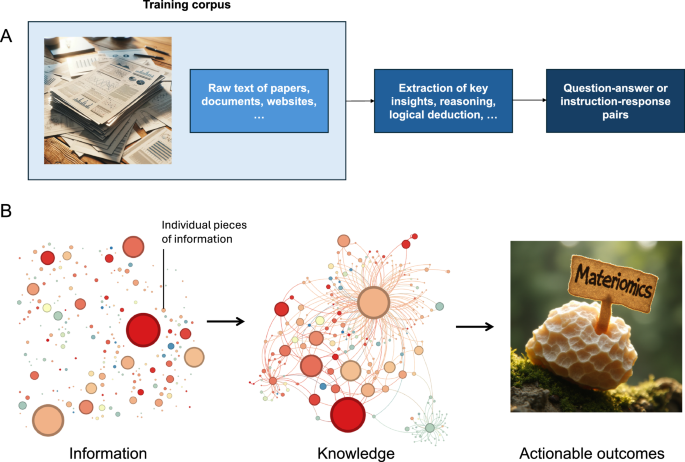

Agentic use in image generation: applications in cross-domain knowledge integration for materials and urban design

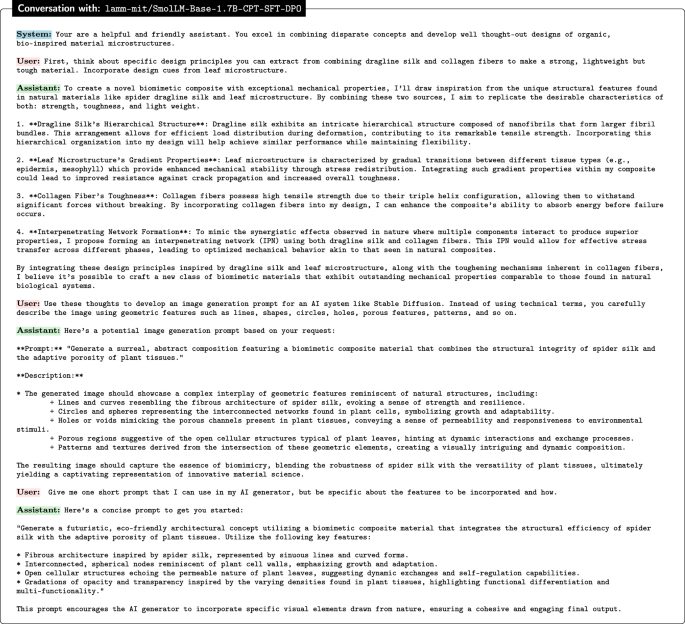

We show several examples to highlight the capabilities and potential of the fine-tuned LLMs, with a particular focus on materials design. The overall goal is to explore how the LLMs developed here can reason over complex materials principles and use the insights developed through multi-step prompting to create a prompt for image generation. We note that our fine-tuned SmolLM-based model performs extremely well for this task and yields quite creative prompts that integrate various ideas and concepts (underscoring its potential as a creative agent). The visual representations of the conceptual designs guided by these prompts are generated with a custom version of a FLUX.1 [dev] model43 fine-tuned on an image dataset of leaf microstructures. The prompt generation demonstrates the LLM’s capability to conceptualize new materials-inspired design principles, which have the potential to be synthesized into physical structures. Specifically, the designs are guided by the decomposed principles generated during prompt generation, which are directly relevant to materials science. Beyond this, LLM-generated prompts open up design possibilities that may not have been intuitive through traditional design processes. This approach bridges the gap between conceptual design and practical material development, and shows how AI can assist design informatics by accelerating concept exploration and identifying viable design features.
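The multi-step prompting can be sketched as a loop that appends each user turn and model reply to a shared message history, so that every stage builds on the earlier ones (for example, principle identification, then a detailed image prompt, then a short version of it). The `chat` callable, the role/content message format, and the stage wording below are assumptions for illustration, not the prompts used in this work. A stub model is used so that the sketch runs without any model weights.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]

# Hypothetical paraphrases of the stages; not the exact prompts used in this work.
STAGE_PROMPTS = [
    "Identify design principles from combining spider silk and collagen, "
    "with cues from leaf microstructures.",
    "Use these principles to write a detailed prompt for an image generation model.",
    "Now provide a short version of that image generation prompt.",
]

def run_multistage(chat: Callable[[List[Message]], str],
                   system_prompt: str, user_turns: List[str]) -> List[Message]:
    """Send user turns one at a time, keeping the full history so each stage sees the previous ones."""
    history: List[Message] = [{"role": "system", "content": system_prompt}]
    for turn in user_turns:
        history.append({"role": "user", "content": turn})
        reply = chat(history)  # any chat model that accepts role/content messages
        history.append({"role": "assistant", "content": reply})
    return history

def echo_chat(history: List[Message]) -> str:
    """Stand-in for a real model: reports how many user turns it has seen."""
    n_user = sum(1 for m in history if m["role"] == "user")
    return f"reply to turn {n_user}"

history = run_multistage(echo_chat, "You are a creative materials scientist.", STAGE_PROMPTS)
short_prompt = history[-1]["content"]  # the final answer would go to the image model
```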

While slight variations in prompting are used to yield different examples (each result presented here includes a detailed presentation of all features), the general goal is to identify design principles that can be extracted by combining different biological materials. For instance, we ask the model to think about ways to combine design elements from spider silk and collagen to make a strong, lightweight but tough material, and to also incorporate design cues from leaf microstructures. The approach can be used to focus directly on material microstructures but can also yield cross-domain results, such as architectural ideas or city design. We provide several design examples spanning a range of applications, from structural to urban design, to illustrate the versatility and potential of bio-inspired design principles. The examples showcase how models trained on biomimetic materials can conceptualize structural motifs commonly observed in hierarchical composites and biomaterial-inspired structures. Furthermore, by presenting visual representations of bio-inspired designs, we demonstrate the capability of the LLM to bridge conceptual design and potential real-world synthesis in materials science.

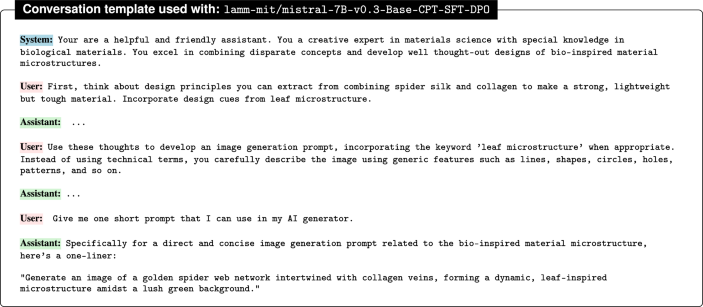

In our first example, we prompt the lamm-mit/SmolLM-Base-1.7B-CPT-SFT-DPO model as follows (Fig. 19 shows the entire conversation):

The conversation with lamm-mit/SmolLM-Base-1.7B-CPT-SFT-DPO aims to develop an image generation prompt. The interactions include several stages: ideation and principle identification, creation of the image generation prompt, and solicitation of a short version of the prompt.

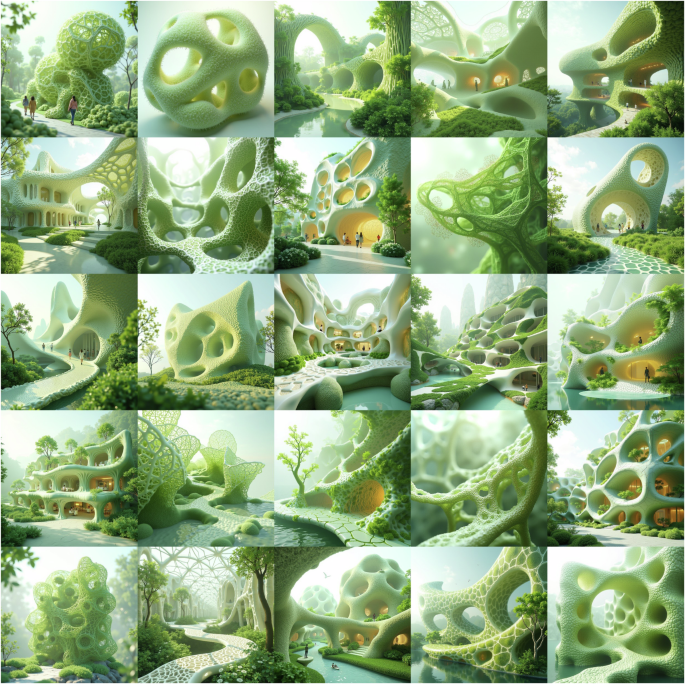

A collection of images produced in this way is shown in Fig. 20. The resulting images resemble a visionary architectural concept where biomorphic structures seamlessly blend with nature, creating a futuristic and sustainable environment. The designs are inspired by natural forms such as honeycombs, coral, and cellular structures, characterized by fluid, curving lines and intricate lattice-like frameworks that evoke the organic world. These structures are integrated with greenery, emphasizing harmony with the environment and suggesting the use of innovative, eco-friendly materials. The open, flowing spaces with large archways and natural light emphasize a connection with the outdoors, creating a sense of tranquility and well-being. As can be seen in some of the images, human figures within these spaces highlight the livability and community-centric design, suggesting a vision where technology and nature coexist harmoniously. The images present a forward-looking approach to architecture, where sustainability, esthetics, and advanced techniques converge to create a new paradigm for living and public spaces. A close inspection of the resulting designs further suggests a clear emergence of the leaf microstructure patterns, a result of the prompt and the fine-tuned ability of the generative model to incorporate this particular design idea.

Generate a futuristic, eco-friendly architectural concept utilizing a biomimetic composite material that integrates the structural efficiency of spider silk with the adaptive porosity of plant tissues. Utilize the following key features:

* Fibrous architecture inspired by spider silk, represented by sinuous lines and curved forms.
* Interconnected, spherical nodes reminiscent of plant cell walls, emphasizing growth and adaptation.
* Open cellular structures echoing the permeable nature of plant leaves, suggesting dynamic exchanges and self-regulation capabilities.
* Gradations of opacity and transparency inspired by the varying densities found in plant tissues, highlighting functional differentiation and multi-functionality.

The images illustrate an architectural vision that draws inspiration from biomorphic forms including leaf microstructures, integrating sustainable design principles with natural elements, creating open, flowing spaces that emphasize harmony between advanced architectural techniques and the organic world.

These design ideas have the potential to be implemented, with specific functionality emphasized. Real-life examples of bio-inspired architectural designs include “The Hive”, a building on the NTU campus designed by Heatherwick Studio. Its honeycomb-like structure mirrors the cellular organization of hives, providing a modified modular hexagonal form that improves structural efficiency, particularly by optimizing airflow and ventilation. Another compelling example is “Little Island” in New York, which is designed to resemble floating leaves and create a dynamic urban landscape. The design shows resilience to climate change, offering flexibility in adapting to changes in water levels.

As illustrated by these examples, bio-inspired designs in architecture go beyond mere esthetics by incorporating natural elements into urban facilities, often enhancing functionality. As shown in Fig. 20, the generated designs have the potential to improve sustainability and energy efficiency by optimizing material usage and structural topology compared with conventional designs. For instance, the designs in row three reflect design simplicity and potential material savings, incorporating non-uniform cellular structures tailored to different space usages. Their structural layout also appears consistent with a basic load-path logic, with larger columns at the lower floors, where the accumulated load is greatest, than at the upper ones. Moreover, energy efficiency could be improved by optimizing heating and cooling systems, as suggested by designs that mimic leaf veins, such as the first design in row one. The second design in row five shows a bio-inspired roof, with beam thicknesses inspired by leaf veins to maximize natural lighting and energy efficiency. Additionally, these designs may enhance the user experience by integrating natural elements into urban facilities, such as the bridges and pathways within the designs. However, realizing these designs in practice requires further research, including studies of wind effects, façade analysis that accounts for the integration of extensive vegetation, verification of loading and structural integrity, and the selection of materials compatible with greenery.
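The load-path argument can be illustrated with simple arithmetic: under gravity loading, each column segment carries the loads of all floors above it, so the required cross-section grows toward the base. The sketch below is a deliberately simplified, hypothetical calculation. It assumes uniform floor loads and a single allowable stress, and it ignores self-weight, lateral (wind) loads, buckling, and design-code safety factors.

```python
from itertools import accumulate
from typing import List

def column_axial_loads(floor_loads_kn: List[float]) -> List[float]:
    """Axial load (kN) in the column segment below each floor, listed from the top floor down."""
    # The segment below floor i carries floor i plus every floor above it.
    return list(accumulate(floor_loads_kn))

def required_area_mm2(axial_load_kn: float, allowable_stress_mpa: float) -> float:
    """Cross-sectional area at which stress = load / area equals the allowable stress."""
    # 1 kN = 1000 N and 1 MPa = 1 N/mm^2, so N / (N/mm^2) gives mm^2.
    return axial_load_kn * 1000.0 / allowable_stress_mpa

# Hypothetical five-storey structure: 500 kN per floor, 20 MPa allowable stress.
loads = column_axial_loads([500.0] * 5)
areas = [required_area_mm2(p, 20.0) for p in loads]
```

With these assumed numbers, the bottom column needs five times the area of the top one, which is the trend visible in the generated designs.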

Furthermore, bio-inspired structures featuring cellular patterns have the potential to be further enhanced with topology optimization for various purposes, such as optimizing material usage while maintaining structural integrity and architectural features. We note that these bio-inspired designs reveal innovative design approaches for environmental integration and sustainability improvements, offering a promising start for exploring the vast design space, although more research is needed to fully validate the application in the real world.

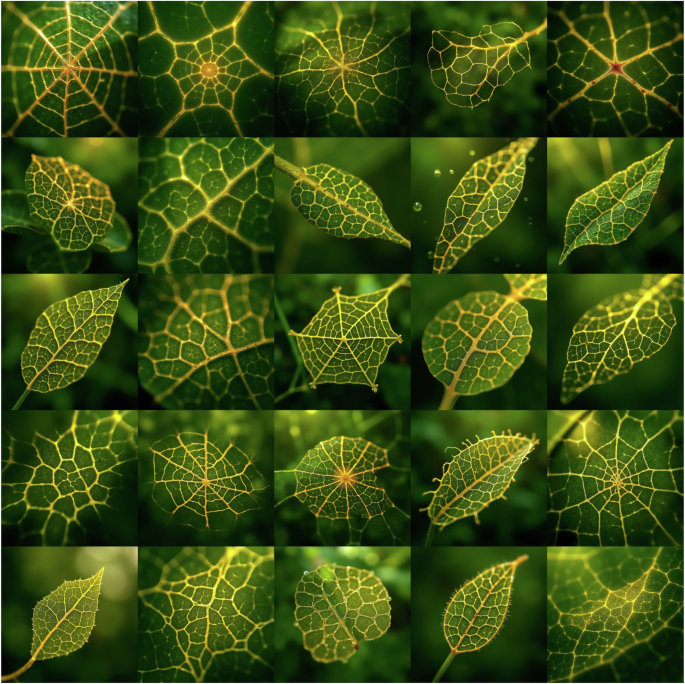

In our second example, we prompt the lamm-mit/mistral-7B-v0.3-Base-CPT-SFT-DPO model as follows (Fig. 21 shows the entire conversation):

The conversation with lamm-mit/mistral-7B-v0.3-Base-CPT-SFT-DPO aims to develop an image generation prompt. The interactions include several stages: ideation and principle identification, creation of the image generation prompt, and solicitation of a short version of it.

A collection of images produced in this way is shown in Fig. 22. The images show close-up views of a novel form of biological material, highlighting intricate vein patterns and microstructures. The leaves exhibit a variety of geometric vein arrangements, ranging from polygonal to radial patterns, with bright yellow to gold veins contrasting sharply against the green leaf surfaces. The diversity in leaf shapes and vein structures offers a variety of structural options, including prominent spider-web-like motifs. In several leaves, the veins form radial patterns that converge toward a central point, much like the structure of a 2D orb spider web. This similarity is especially pronounced where the vein network is more symmetrical and evenly spaced, creating a web-like appearance. Some of these spider-web-like patterns resemble projections of various 3D webs, such as cobwebs and sheet webs, which makes them visually interesting for studies of natural design and biomimicry. A close inspection shows that textures and depth are captured in fine detail, and the soft yet focused lighting accentuates these patterns.

Generate an image of a golden spider-web network intertwined with collagen veins, forming a dynamic, leaf-inspired microstructure amidst a lush green background.

The images show a novel design of leaf-like structures with prominent vein structures, some of which exhibit intricate spider-web-like patterns, all set on a green background.

Figure 23 shows a few additional sample images, generated from prompts that lamm-mit/SmolLM-Base-1.7B-CPT-SFT-DPO produced when specifically asked to develop urban design ideas based on a set of biological materials, including spider silk, collagen, and leaves. The images illustrate a conceptual approach to urban design that synthesizes advanced architectural techniques with principles of ecological integration and sustainability. The structures exhibit biomimetic design, characterized by spiraling, organic forms that mimic natural patterns, possibly influenced by leaf microstructures. This design approach aligns with the principles of biophilic architecture44, which aims to reconnect urban environments with the natural world by incorporating natural elements into the built environment.

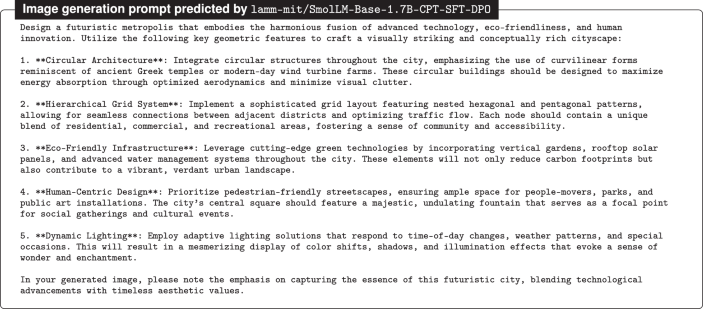

The images, generated with FLUX.1 [dev]43, illustrate conceptual urban designs integrating biomimetic architecture and ecological sustainability. The left image depicts spiraling vertical towers with embedded greenery, evoking natural patterns (generation prompt: Utilize the spiral geometry of a nautilus shell to construct a series of interconnected, curved towers that form the city’s skyline. The towers’ spiraling walls house lush greenery, generating a perpetual canopy of foliage that filters sunlight and provides shade. Atop each tower stands a sleek, aerodynamic dome housing a state-of-the-art research facility, promoting collaboration among scientists and innovators across disciplines). The center image shows cylindrical structures interconnected by elevated walkways, enhancing both social and ecological connectivity (generation prompt: Imagine a cityscape where towering skyscrapers twist and curve along the dual axis grid, their rooftops adorned with lush greenery and shimmering solar panels. At the heart of each tower lies a vibrant, neon-lit hub – a Circular Economy Center – housing cutting-edge research and innovation in sustainable technologies. Connected by elevated walkways and hoverbikes, this city fosters a thriving ecosystem of collaboration and progress). The right image presents a broader urban layout in which domed buildings and landscaped water features are seamlessly integrated, reflecting a holistic approach to urban planning that prioritizes regeneration and biodiversity. The prompt used for this case is much longer and is included as Box 1.

Several similar architectural designs have already been realized, lending support to the feasibility of these generated concepts. Examples include Azabudai Hills, a district in Tokyo featuring curving planted rooftops; CapitaSpring, a skyscraper in Singapore with orthogonal strips of plants embedded in its façade; and the Vertical Forest, designed by Stefano Boeri Architetti, which integrates residential buildings with diverse greenery. However, there remains significant potential to explore and expand these ideas within extended urban systems, for instance, how to interconnect individual structures, ensure seamless integration with other facilities, and align with an overall urban planning strategy.

The integration of nature into architecture has roots in movements such as organic architecture, as advocated by Frank Lloyd Wright45, where the harmony between human habitation and the natural world is paramount. The designs proposed here, however, push this concept further by embedding extensive greenery directly into the architectural framework, creating vertical gardens and green terraces that are integral to the building’s structure rather than ancillary elements. Moreover, the buildings are interconnected through elevated walkways, which not only facilitate human movement but also promote ecological connectivity, potentially serving as corridors for urban wildlife and contributing to biodiversity. This interconnectedness suggests a systems-thinking approach to urban design, where the built environment is considered part of a larger ecological network rather than an isolated entity.

The design concepts represented in these images could be seen as a paradigm shift in urban planning, moving beyond sustainability toward regenerative design. This approach aims to create urban environments that not only minimize ecological impact but actively restore and enhance the natural environment. Such a model could represent a significant advancement in urban ecology, envisioning a future where cities operate as living systems, integrated with and supportive of their surrounding ecosystems. More work is needed to explore this, but the example illustrates how the methods developed here can guide creative research and technology development.
